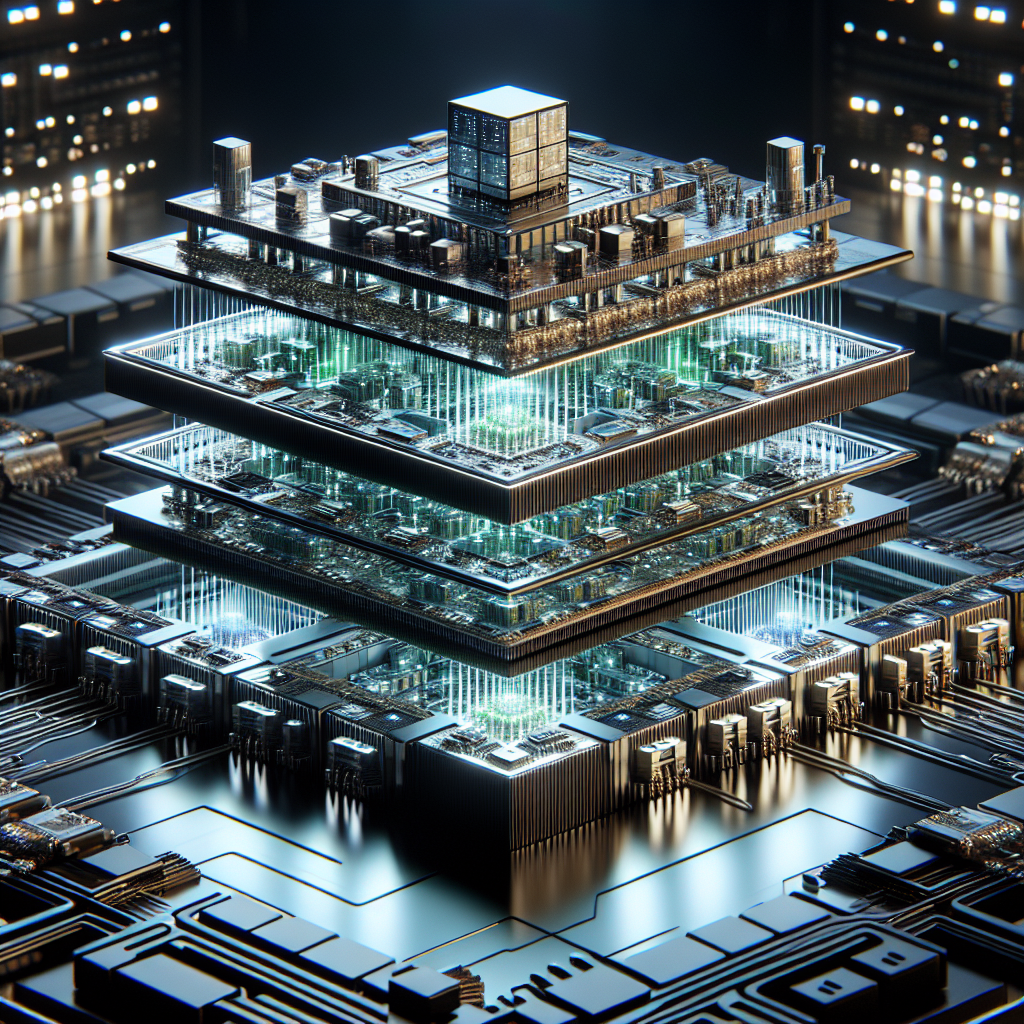

NVIDIA’s Rubin platform, also known as Vera Rubin, is aimed squarely at businesses running large-scale AI workloads. It combines six chips (the Vera CPU, Rubin GPU, NVLink 6 switch, ConnectX-9 SuperNIC, BlueField-4 DPU, and Spectrum-6 Ethernet switch) into a single rack-scale AI supercomputer, with the stated goal of improving both efficiency and scalability over the previous generation.

The main appeal of Rubin is its ability to handle trillion-parameter models. That capability rests on two components: NVLink 6, quoted at 260 TB/s of interconnect bandwidth across a full rack, and HBM4 memory, with more than 1,500 TB/s of aggregate memory bandwidth at the same scale. Both figures are rack-level aggregates in terabytes per second, not per-chip numbers. Higher bandwidth means less time spent moving weights and activations between chips, which lowers latency for the large models businesses are increasingly adopting.
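To put bandwidth figures of this size in perspective, the short Python sketch below estimates how large a trillion-parameter model's weights are at a few numeric precisions, and how long one full pass over them would take at a given aggregate memory bandwidth. It is a back-of-the-envelope illustration, not a benchmark: the precisions, the helper names, and the choice to treat 1,500 as an aggregate figure in terabytes per second are all assumptions. A figure quoted in terabits per second would need dividing by 8 first, which the `tbits_to_tbytes` helper does.

```python
# Back-of-the-envelope sketch; every constant here is an illustrative assumption.

BYTES_PER_PARAM = {"FP4": 0.5, "FP8": 1.0, "BF16": 2.0}  # assumed precisions


def tbits_to_tbytes(tbits_per_s: float) -> float:
    """Convert terabits per second to terabytes per second (8 bits per byte)."""
    return tbits_per_s / 8.0


def weight_terabytes(num_params: float, bytes_per_param: float) -> float:
    """Size of the model weights in terabytes (1 TB = 1e12 bytes)."""
    return num_params * bytes_per_param / 1e12


def sweep_time_ms(size_tb: float, bandwidth_tb_per_s: float) -> float:
    """Milliseconds needed to read size_tb terabytes once at the given bandwidth."""
    return size_tb / bandwidth_tb_per_s * 1e3


NUM_PARAMS = 1e12            # a "trillion-parameter" model
BANDWIDTH_TB_PER_S = 1500.0  # assumed aggregate memory bandwidth in TB/s

for precision, bytes_per_param in BYTES_PER_PARAM.items():
    size = weight_terabytes(NUM_PARAMS, bytes_per_param)
    ms = sweep_time_ms(size, BANDWIDTH_TB_PER_S)
    print(f"{precision}: {size:.1f} TB of weights, {ms:.2f} ms per full pass")
```

The estimate ignores activations, key-value caches, and communication overhead, so it is a lower bound on real step times rather than a prediction.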

Rubin’s architecture is also pitched on cost, not just speed. NVIDIA’s headline claims, relative to the previous Blackwell generation, are up to four times fewer GPUs for training mixture-of-experts models and up to a 10x reduction in cost per inference token, which is where the 90% figure comes from. These are best-case vendor numbers, but if they hold for a given workload they translate directly into infrastructure savings, which makes Rubin appealing to organizations trying to control costs while adopting newer AI hardware.
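The arithmetic behind these two claims is simple enough to sketch. The Python snippet below applies a 4x hardware reduction and a 90% cost cut to a baseline deployment. The baseline of 1,000 GPUs and $2.00 per million tokens is purely hypothetical, as are the function names; only the 4x and 90% factors come from the text above.

```python
import math


def gpus_after(baseline_gpus: int, reduction_factor: float) -> int:
    """GPUs needed if the same job takes reduction_factor times fewer of them."""
    return math.ceil(baseline_gpus / reduction_factor)


def cost_after(baseline_cost: float, fractional_cut: float) -> float:
    """Cost per million tokens after a fractional cut (0.90 means 90% lower)."""
    return baseline_cost * (1.0 - fractional_cut)


BASELINE_GPUS = 1000   # hypothetical fleet size on the older architecture
BASELINE_COST = 2.00   # hypothetical dollars per million tokens

new_gpus = gpus_after(BASELINE_GPUS, 4.0)
new_cost = cost_after(BASELINE_COST, 0.90)

print(f"GPUs: {BASELINE_GPUS} -> {new_gpus}")
print(f"Cost per million tokens: ${BASELINE_COST:.2f} -> ${new_cost:.2f}")
print(f"Equivalent cost multiple: {BASELINE_COST / new_cost:.0f}x cheaper")
```

Note that a 90% cut and a 10x reduction are the same statement, and that "up to" figures describe the best case; a real deployment would substitute its own measured baseline and reduction factors.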

Rubin also comes with challenges. The first is energy: the platform’s high power demands could raise operating costs for businesses that depend on it. The second is lock-in: reliance on NVIDIA’s ecosystem adds risk and complexity, especially for organizations that have built their infrastructure around other platforms. Finally, running trillion-parameter models is hard in its own right. It requires robust observability pipelines and careful planning to integrate the models into existing workflows.
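The energy concern can be made concrete with a simple estimate. The sketch below computes a yearly electricity bill from a rack's power draw, the facility's power usage effectiveness (PUE, total facility power divided by IT power), and the electricity price. All three input values are hypothetical placeholders, not Rubin specifications, and the function name is illustrative.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(it_load_kw: float, pue: float, price_per_kwh: float) -> float:
    """Yearly electricity cost for hardware running continuously.

    it_load_kw:    power drawn by the hardware itself, in kilowatts
    pue:           power usage effectiveness of the facility (>= 1.0)
    price_per_kwh: electricity price in dollars per kilowatt-hour
    """
    return it_load_kw * pue * HOURS_PER_YEAR * price_per_kwh


# Hypothetical inputs; replace with measured values for a real estimate.
RACK_KW = 100.0
PUE = 1.3
PRICE = 0.10

cost = annual_energy_cost(RACK_KW, PUE, PRICE)
print(f"Estimated annual energy cost per rack: ${cost:,.0f}")
```

Because the cost scales linearly with each input, doubling the power draw doubles the bill, which is why power figures deserve the same scrutiny as the purchase price.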

Rubin is scheduled for release in late 2026, with a more advanced version expected about a year later, which gives companies a timeline to plan against. Organizations can prepare by evaluating their current infrastructure, exploring integration strategies, and investing in team training. The phased rollout also gives businesses time to assess their readiness and adapt their operations before committing to the new system.

The larger shift here is from making AI inference faster to changing how AI workloads are processed in the first place. If the combined gains in efficiency and scalability materialize, organizations will be able to take on larger and more complex problems than current hardware allows, across a range of industries.

The applications that stand to benefit range from natural language processing to autonomous systems. Better performance at lower cost matters to business leaders and investors alike, so executives have good reason to follow this development closely.

In conclusion, NVIDIA’s Rubin platform represents a significant step forward in AI hardware. It promises gains in efficiency, scalability, and infrastructure cost, but it also brings challenges in energy use, ecosystem dependence, and operational complexity that require careful preparation. Organizations that plan for both sides now will be better placed to stay competitive as the technology arrives.
