-

Dental Intelligence Announces Key Leadership Promotions to Support Continued Growth

In an important strategic move, Dental Intelligence has announced the promotion of two key executives, Dan Larsen and Meghan Emswiller, as the company gears up to scale its operations and strengthen the leadership team. This decision reflects the ongoing growth and commitment to innovation within the organization, a provider of robust practice performance solutions for the dental industry.

Dan Larsen, now serving as Chief Product Officer, has successfully transitioned from his previous role as Senior Vice President of Product. His elevation to this key position signals a dedication to advancing Dental Intelligence’s product strategy and further developing innovative solutions that not only meet today’s challenges for dental practices but anticipate future needs. In his new capacity, Larsen is enthusiastic about continuing the development of products that significantly enhance performance and patient care.

According to Larsen, “I’m excited to step into this expanded role and continue building products that make a real difference for dental practices.” His focus on a customer-oriented approach has been pivotal in reinforcing Dental Intelligence’s standing as the industry’s exclusive end-to-end practice performance solution provider, with a client base spanning thousands of dental practices across the nation.

Meanwhile, Meghan Emswiller has transitioned from Vice President of Human Resources to Senior Vice President of Human Resources. Her promotion acknowledges her essential role in nurturing a people-first culture within the organization. Emswiller’s leadership in establishing HR initiatives has been vital in creating a thriving work environment, which is crucial for supporting the company’s sustained growth trajectory.

Reflecting on her new role, Emswiller stated, “I’m incredibly honored to continue supporting the amazing people who make Dental Intelligence such an exceptional organization.” Her commitment to fostering employee development and implementing programs that attract top talent is critical as Dental Intelligence continues its ambitious expansion in the competitive dental industry.

These leadership changes are not merely administrative; they represent a strategic approach to cultivating a robust and adaptive organization that prioritizes innovation and customer satisfaction. The focus on growing leadership talent reflects Dental Intelligence’s foresight into the evolving dynamics of the dental sector, where responsive, data-driven management is essential.

As the only complete practice performance solution in dentistry, Dental Intelligence has consistently aimed to enhance practices’ efficiency. Its offerings span analytics, patient engagement, and insurance management, bringing actionable insights and automation together. This holistic approach aims to increase vital metrics such as production, patient visits, and collections while minimizing overhead costs.

The leadership team’s experience is expected to help Dental Intelligence keep pace with industry developments and stay ahead by delivering high-quality, tailored solutions that meet the unique demands of dental practices today and in the future. These promotions are a testament to the company’s commitment to investing in talent capable of steering the organization through its next phase of growth.

As Dental Intelligence continues to evolve, the enhanced roles of Larsen and Emswiller will likely have far-reaching implications not only for the company but also for the wider dental industry, where optimized performance solutions are becoming increasingly vital. Their strategic importance in reinforcing the organizational structure is pivotal as Dental Intelligence embarks on its ambitious growth agenda, positioning itself for further innovation and market leadership.

In summary, Dental Intelligence’s recent promotions reflect strategic foresight and a commitment to nurturing leadership that aligns with its vision of providing exceptional solutions in the dental sector. With these key appointments, the company is well-equipped to navigate the complexities of the dental industry, continually pushing the envelope on what practice performance can achieve.

-

Revolutionizing AI Development? Here’s What You Need to Know

Imagine a world where artificial intelligence systems not only respond to prompts but possess the ability to truly understand and adapt to tasks autonomously. This concept is no longer a distant dream; it’s emerging as a pivotal evolution in AI development. The shift from merely prompting AI to teaching it represents a significant transformation, enabling a new era of AI functionality. With a structured and scalable approach to AI training, developers can empower systems to grow, adapt, and problem-solve with greater independence.

Traditionally, AI systems have operated within the confines of static commands, requiring frequent adjustments and meticulous supervision by developers. However, with the introduction of innovative methodologies like the Agent Development Kit (ADK), professionals can create more intelligent and efficient AI capabilities. This approach fosters the development of reusable skills tailored to a myriad of tasks. By adopting a teaching paradigm, we unlock the potential for AI to grasp its role more fully, moving beyond mere execution to genuine understanding and interaction.

Transforming AI Functionality Through Teaching

The core principle behind this transformation lies in the concept of teaching AI through structured methodologies, rather than relying on exhaustive prompt engineering. The ADK provides a framework that facilitates the development of task-specific skill packages. For instance, imagine the application of AI in customer support, where intelligent systems could handle inquiries, product searches, and order tracking with remarkable efficiency. By focusing on specific tasks and developing streamlined skill packages, the potential for enhancing customer experiences and operational workflows becomes enormous.

One of the key takeaways from this new approach is the emphasis on less manual intervention in AI systems. By integrating these structured techniques, businesses can enhance real-time recommendations, automated responses, and secure user authentication. For example, with authentication handled by a tool like Clerk, an AI system can not only comprehend user queries but also adapt its responses based on each user’s historical interactions, ultimately leading to improved customer satisfaction.

Efficiency Through Multi-Agent Frameworks

Another aspect of this innovative development involves establishing multi-agent frameworks comprising both parallel and sequential agents. These frameworks significantly improve the functionality and efficiency of AI systems, enabling them to tackle complex multitasking environments effectively. The ability to harness multiple agents in synergy allows for a robust operational structure where AI can manage diverse tasks without losing focus or efficacy.
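To make the distinction between parallel and sequential agents concrete, here is a minimal sketch in plain Python using asyncio. It does not use the ADK's own API; the agent functions (`lookup_product`, `check_order`, `draft_reply`) and the dictionary-based context are hypothetical names chosen only for illustration.

```python
import asyncio

# Hypothetical agents: each takes a context dict and returns an updated copy.
async def lookup_product(ctx):
    return {**ctx, "product": f"details for {ctx['query']}"}

async def check_order(ctx):
    return {**ctx, "order": "status for latest order"}

async def draft_reply(ctx):
    return {**ctx, "reply": f"{ctx['product']}; {ctx['order']}"}

async def run_parallel(agents, ctx):
    """Run independent agents concurrently and merge their outputs."""
    results = await asyncio.gather(*(agent(ctx) for agent in agents))
    merged = dict(ctx)
    for result in results:
        merged.update(result)
    return merged

async def run_sequential(steps, ctx):
    """Run steps one after another, feeding each output to the next."""
    for step in steps:
        ctx = await step(ctx)
    return ctx

async def handle(query):
    # Fact gathering runs in parallel; the reply is drafted afterwards.
    async def gather_facts(ctx):
        return await run_parallel([lookup_product, check_order], ctx)
    return await run_sequential([gather_facts, draft_reply], {"query": query})

if __name__ == "__main__":
    print(asyncio.run(handle("blue kettle"))["reply"])
```

In this sketch the two lookups do not depend on each other, so they run concurrently, while the reply step waits for both because it needs their results.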

Moreover, the integration of structured datasets and task-specific tools equips AI agents to perform precise functions, such as managing product information, answering customer queries, and overseeing return processes. Such capabilities do not simply enhance operational efficiency but also contribute to elevated customer satisfaction levels. Entrepreneurs and business leaders can envision the operational landscape shifting as companies become increasingly reliant on advanced AI systems that reduce manual workload while amplifying productivity.

The ADK promotes iterative development and the seamless integration of company-specific workflows, expanding AI applications across varied fields, including logistics, HR automation, and even code review processes. This adaptability drives innovation and significantly boosts overall productivity—realizing AI’s potential to revolutionize traditional approaches to business.

Structured Learning for Effective AI

The framework provided by the ADK focuses on developing comprehensive hierarchical documentation that allows AI to home in on relevant information without being overwhelmed by data. This ensures that AI can concentrate on what truly matters for the task at hand, which in turn supports productivity.

To illustrate, let’s consider a practical application: creating a multifaceted customer support AI. A well-structured skill package could include quick-start guides and reference materials, ensuring immediate task execution without overload, thereby allowing seamless customer interactions and satisfactory resolutions.

In summary, the shift from simply prompting AI to teaching it through advanced frameworks like the ADK signifies a major leap forward in AI development. Business leaders and product builders should take note of these transformative methodologies, as they represent an unparalleled opportunity to engage AI as a genuine partner in operational excellence. As industries continue to evolve, harnessing these innovative capabilities could set the stage for significant advancements in numerous sectors.

Conclusion

As we embrace this new era of AI development, the question remains: what could we achieve if our AI systems not only responded but genuinely understood their functions? The possibilities are vast, and the time to explore them is now.

-

Walmart Adds AI-Powered In-Store Search, Shopping List and Party Planner

Walmart has unveiled a suite of new artificial intelligence (AI) tools aimed at enhancing customer experience during the bustling holiday shopping season. These innovations, now live on Walmart’s app and website, are designed to streamline the shopping process, making it easier for customers to find and purchase items both in-store and online.

One of the standout features is the In-Store Savings function within the Walmart app. This feature allows shoppers to see which items are on sale at their specific location, making it more efficient to budget and save while shopping. In addition, an enhanced in-store search feature has been introduced, enabling users to check product availability and locate items within the store. The app even sorts shopping lists by aisle, effectively guiding consumers through their shopping experience.
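As a rough illustration of what sorting a shopping list by aisle involves, the sketch below orders items by a per-store aisle number. The aisle map and item names are invented for the example, and nothing here reflects how Walmart's app is actually implemented.

```python
# Hypothetical aisle map for one store; real data would come from the retailer.
AISLE_BY_ITEM = {"milk": 12, "bread": 3, "apples": 1, "pasta": 7}

def sort_by_aisle(items, aisle_by_item):
    """Order items by aisle number; items with no known aisle go last."""
    return sorted(
        items,
        key=lambda item: (item not in aisle_by_item, aisle_by_item.get(item, 0), item),
    )

if __name__ == "__main__":
    shopping_list = ["milk", "candles", "pasta", "apples", "bread"]
    print(sort_by_aisle(shopping_list, AISLE_BY_ITEM))
```

Items without a known location are kept at the end of the list rather than dropped, so the shopper still sees them.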

Walmart’s AI digital assistant, Sparky, has taken on a new role as a virtual party planner. When users share details about upcoming events, Sparky curates a personalized list of necessary items, simplifying the planning process for gatherings and celebrations. This feature reflects Walmart’s broader vision of integrating AI into everyday consumer experiences, enhancing convenience and customer satisfaction.

In addition to these features, Walmart has introduced AI-generated audio clips that summarize premium beauty product descriptions, catering to customers who appreciate concise and informative insights while shopping. The Shop the Background feature allows users to click on items featured in product images and add them directly to their cart, while the Dynamic Showroom feature lets customers visualize different room setups by selecting their preferred furnishings.

Walmart executives, including Senior Vice President of Shopping Experiences, Tracy Poulliot, have expressed enthusiasm for the positive impact these tools are having on shopping behavior. According to Poulliot, users who engage with the app while shopping in-store tend to spend 25% more on average than those who do not utilize the app. This statistic underscores the effectiveness of leveraging AI technology to enhance customer engagement and drive sales.

As AI shopping adoption continues to rise, with a recent survey indicating that 32% of respondents are willing to use generative AI for shopping, Walmart’s commitment to improving its digital offerings aligns perfectly with consumer trends. CEO Doug McMillon highlighted the importance of AI during a recent earnings call, emphasizing the retailer’s ongoing focus on improving and scaling Sparky to provide a more personalized shopping experience.

The integration of these AI tools marks a significant advancement in Walmart’s approach to retail, showcasing how technology can transform the shopping experience. By prioritizing user-friendly features that assist in both in-store and online shopping, Walmart is setting a new standard for customer engagement in the retail sector.

Although details regarding the implementation and future enhancements of these AI features remain to be fully explored, the launch signifies a pivotal moment in the intersection of technology and retail, with concrete implications for business leaders and product developers aiming to adapt in an increasingly digital marketplace.

-

Gov’t to deploy AI algorithm in tax collection to add 2.5 million new taxpayers

The implementation of artificial intelligence in tax collection by government agencies represents a significant shift in how taxation is approached, aiming to increase efficiency and broaden the tax base. A recent announcement detailed plans to deploy an AI algorithm specifically designed to identify and register an estimated 2.5 million potential taxpayers, individuals who currently operate outside the formal payroll system.

This initiative is particularly relevant for professionals such as doctors in private practice who may not have formal employment contracts, yet earn income comparable to their counterparts in established positions. The logic behind this endeavor rests on the principle that individuals within the same labor market—sharing similar education, skills, and living in the same areas—are likely generating similar income levels. By focusing efforts on these underrepresented groups, governments can not only enhance revenue collection but also create a more equitable tax system.

The AI algorithm in question aims to leverage existing data to pinpoint these individuals. The technology can analyze various indicators—such as residential address, professional networks, and economic activities—to create a profile of non-taxpayers who have the capacity to contribute to government finances. This strategy represents an intersection between advanced technology and public policy, showcasing how innovations in AI can have far-reaching implications for governance and economic development.
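The peer-comparison logic described above can be sketched in a few lines. This is only an illustration under stated assumptions, not the government's actual algorithm: the record fields (`profession`, `district`, `registered`, `income`), the `min_peers` threshold, and the use of a median are all hypothetical choices.

```python
from collections import defaultdict
from statistics import median

def flag_unregistered(people, min_peers=2):
    """Estimate income for unregistered people from registered peers.

    Peers are registered taxpayers with the same profession and district.
    Returns (name, estimated_income) pairs for human review.
    """
    declared = defaultdict(list)
    for person in people:
        if person["registered"]:
            key = (person["profession"], person["district"])
            declared[key].append(person["income"])

    flagged = []
    for person in people:
        if person["registered"]:
            continue
        peers = declared.get((person["profession"], person["district"]), [])
        if len(peers) >= min_peers:  # skip groups too small to compare against
            flagged.append((person["name"], median(peers)))
    return flagged

if __name__ == "__main__":
    sample = [
        {"name": "A", "profession": "doctor", "district": "North", "registered": True, "income": 100},
        {"name": "B", "profession": "doctor", "district": "North", "registered": True, "income": 120},
        {"name": "C", "profession": "doctor", "district": "North", "registered": True, "income": 140},
        {"name": "D", "profession": "doctor", "district": "North", "registered": False, "income": None},
        {"name": "E", "profession": "plumber", "district": "South", "registered": False, "income": None},
    ]
    print(flag_unregistered(sample))
```

The output is a list of candidates with an estimate attached, not a tax assessment; person E is not flagged because there are no registered peers to compare against.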

Furthermore, this move is part of broader efforts to modernize tax administration systems globally. As governments face increasing pressures to fund public services amid economic challenges, adopting AI technologies provides a pragmatic solution for tax compliance and enforcement. The shift towards digital infrastructure in tax collection could also streamline processes, reducing costs associated with traditional methods and improving accuracy in revenue estimates.

The anticipated 2.5 million new taxpayers could significantly impact government budgets, offering a potential increase in funding for essential services such as healthcare, education, and infrastructure. As these stakeholders are integrated into the tax system, they would be contributing their fair share while benefitting from the public services funded by this revenue. This model of inclusive economic participation could help foster a more invested citizenry, as individuals see the return on their contributions through enhanced public services.

However, the deployment of AI in tax collection also raises important considerations regarding privacy and data security. The collection and analysis of personal information necessitate robust safeguards to protect individuals’ rights and prevent misuse of data. Ensuring transparency in how data is utilized and providing avenues for recourse are crucial for maintaining public trust in these initiatives.

Additionally, while AI systems can identify potential tax liabilities, human oversight will remain critical in evaluating the accuracy of these findings. Governments must balance technology’s capabilities with expert judgement to ensure fair treatment of individuals and prevent misclassification or other errors.

In conclusion, the government’s decision to employ AI algorithms in tax collection exemplifies how technology can serve as a tool for economic expansion and fairness. By targeting untapped taxpayer populations, it not only aims to increase revenue but also emphasizes the importance of social equity in the tax system. This approach aligns both technological advancement and public service needs, signaling a forward-thinking shift in tax policy that could potentially inspire similar initiatives worldwide.

-

RIL, Google partner to offer free Gemini AI plan to Jio users

In a groundbreaking initiative set to redefine artificial intelligence accessibility in India, Reliance Industries Ltd (RIL) and Google have announced a strategic partnership that aims to broaden AI adoption among Indian consumers. As part of this collaboration, Google will provide its premium Gemini Pro plan, valued at Rs 35,100, free for 18 months to eligible Jio users. This generous offer represents a significant investment in empowering a diverse user base with advanced AI capabilities.

The package not only includes access to Google’s sophisticated Gemini 2.5 Pro model for enhanced image and video generation but also incorporates expanded features like 2 TB of cloud storage and access to NotebookLM for educational purposes. This presents a unique opportunity for Jio users, particularly those aged 18 to 25, to leverage high-quality AI tools for creativity and research.

Rolling out through the MyJio app, the service is set to launch initially for users on unlimited 5G plans, followed by a swift nationwide expansion to reach all Jio customers. RIL emphasizes the intent to democratize access to AI technology, thereby enhancing educational and creative opportunities for a significant segment of the population.

Moreover, RIL is also collaborating with Google Cloud, providing organizations access to advanced AI hardware accelerators known as Tensor Processing Units (TPUs). This strategic move is designed to enable businesses to train and deploy more complex AI models, ensuring that they can efficiently execute demanding projects. The alignment with India’s national AI strategy is clear, as RIL aims to bolster the country’s backbone in AI innovation, supporting the Prime Minister’s vision of positioning India as a global AI powerhouse.

In tandem with the rollout of the Gemini AI plan, the partnership also highlights the establishment of Reliance Intelligence as a key strategic partner for Google Cloud. This collaboration will drive the adoption of Gemini Enterprise, a versatile AI platform designed specifically for businesses. The benefits of Gemini Enterprise are substantial, offering organizations the tools necessary to empower teams in discovering, sharing, and running AI applications seamlessly within a secure environment.

Furthermore, Reliance Intelligence plans to enhance the offering by developing pre-built enterprise AI agents tailored for Gemini Enterprise users. This move will diversify the available AI solutions, providing users with access to both Google’s advanced agents and those from third-party developers. By galvanizing the creativity and innovation potential of Indian enterprises, RIL is positioning itself as a critical player in the AI ecosystem.

Mukesh D Ambani, the chairman of RIL, articulated the vision behind this collaboration, stating, “Through our collaboration with strategic and long-term partners like Google, we aim to make India not just AI-enabled but AI empowered – where every citizen and enterprise can harness intelligent tools to create, innovate and grow.” This reflects a broader commitment to inclusivity in leveraging technological advancements, further emphasizing RIL’s role in shaping a tech-driven future for the country.

Sundar Pichai, CEO of Google and Alphabet, echoed this sentiment, emphasizing the importance of expanding access to AI tools for consumers, businesses, and the vibrant developer community in India. The collaboration is not only a remarkable step forward in AI technology access but also sets the stage for transformative developments across various sectors in India.

In conclusion, this partnership between RIL and Google marks a significant chapter in the narrative of AI innovation in India. By offering free access to cutting-edge technologies, it aims to empower a vast population with tools that foster creativity and innovation. As the rollout progresses, the potential for improved educational outcomes, enhanced business efficiency, and overall AI fluency among the Indian populace becomes increasingly viable, positioning India to take a lead in the global AI landscape.

-

The Future of Geospatial Data: Google Earth AI in Action

The advent of Google Earth AI marks a pivotal moment in the world of geospatial data analysis, ushering in an era where complex planetary datasets become not just observed phenomena but tools for actionable insights. Imagine if the vast and intricate web of our planet’s data could not only reveal its secrets but transform lives, safeguard ecosystems, and influence urban dynamics—all in real-time. This is the mission behind Google Earth AI, a technological advancement that seeks to revolutionize how we decode the intricate layers of information that nature and humanity have woven together.

For decades, geospatial analysis was a labor-intensive endeavor, requiring immense resources and a considerable amount of time. Traditional methods rendered even the most critical data insights sluggish and reactive rather than proactive. However, Google Earth AI shifts this paradigm, leveraging the capabilities of artificial intelligence to streamline the analysis process. This innovative platform turns complex datasets into clear, actionable intelligence, allowing organizations to make smarter, faster decisions that can influence everything from disaster management to sustainable urban development.

In its mission to transform geospatial analysis, Google Earth AI has automated numerous complex tasks, fundamentally changing how industries approach significant global challenges. From identifying flood-prone regions within hours instead of months, to accurately pinpointing health vulnerabilities and deploying resources effectively, the technology enhances the speed, accuracy, and scope of geospatial insights.

One of the most prominent applications of Google Earth AI is its predictive analytics capability, which integrates both historical context and real-time data to forecast potential events such as hurricanes and floods. This proactive approach enables communities to prepare in advance, significantly reducing risks and minimizing damage. The implications for disaster management are profound—by anticipating emergencies, resources can be allocated more efficiently, and the impact on vulnerable populations can be mitigated.

Furthermore, the platform links geospatial data with health statistics, an application that could redefine how public health crises are managed. By mapping disease outbreaks and tracking health vulnerabilities, policymakers can allocate healthcare resources more effectively, especially during tumultuous times like pandemics. Combining spatial insights with health metrics offers an unprecedented advantage in responding to community health issues.

Real-time data processing is another cornerstone of Google Earth AI, enabling dynamic decisions that address immediate challenges. Whether monitoring deforestation impacts or responding to climate-related environmental changes, the platform equips users with the insights they need to act promptly and effectively. The ability to visualize these changes in real-time allows organizations to be agile and adaptive, qualities that are increasingly necessary in our rapidly evolving world.

Google Earth AI supports sustainable and resilient development by offering actionable intelligence that aids urban planning, environmental conservation efforts, and long-term policy-making. This innovative technology does not merely provide tools for understanding our world; it lays down a foundation for an informed and sustainable approach to its future. As we grapple with the pressing challenges of climate change and urbanization, embracing automation in geospatial analysis becomes increasingly vital for building the cities of tomorrow.

In summary, Google Earth AI exemplifies a transformative leap in how we interact with geospatial data. It signals the dawn of a new era where data is not just passively reported but dynamically utilized. By automating complex data analysis processes, this tool delivers quicker, more accurate, and scalable insights that can significantly impact various sectors such as disaster management, public health, and urban planning. The technology’s capacity to offer predictive insights tailored to current needs indeed positions it as a cornerstone for fostering a sustainable, resilient future.

-

Tagvenue Launches Tagvenue PRO, Bringing AI to Help Venues Win More Bookings

In a move that positions it as a leader in the event management space, Tagvenue has officially launched Tagvenue PRO, a cutting-edge, AI-powered platform designed specifically for streamlining venue management. Set to significantly transform the way venues handle bookings, Tagvenue PRO automates several cumbersome tasks involved in managing inquiries, follow-ups, contracts, and payments. Launched on October 28, 2025, the platform aims to help venue managers work more efficiently while increasing their booking rates.

Every month, over one million event planners use Tagvenue’s marketplace to discover and book from a selection of 18,000 verified venues across six countries. With the introduction of Tagvenue PRO, the platform extends its service offering beyond booking to include comprehensive venue management tools. This seamless integration of automation and insights equips managers with the tools necessary to thrive in a highly competitive market.

One of the standout features of Tagvenue PRO is its intelligent automation capabilities. The platform not only automates lead responses but also composes follow-ups and centralizes communication from various channels, thereby reducing the workload for venue operators. Integrated tools that manage payments, including partnerships with services like Stripe, further enhance the efficiency of the platform.

The Tagvenue PRO dashboard provides valuable data insights, enabling managers to pinpoint where leads originate, track conversion rates, and make informed decisions based on solid analytics. Artur Stepaniak, co-founder of Tagvenue, emphasized the importance of this feature, stating, “Venue managers juggle hundreds of details at once, from answering inquiries to closing contracts. Tagvenue PRO was created to relieve that pressure. It’s an intelligent assistant that works alongside you, ensuring that no message or opportunity slips through the cracks.” This insight highlights the platform’s commitment to improving operational efficiency.

The hospitality industry has increasingly recognized the significant role automation plays in business growth. According to hospitality forecasts for 2024, employing automated systems can enhance both revenue and productivity. For example, studies indicate that AI-assisted booking systems can increase conversion rates by up to 20% while reducing administrative time by nearly 30%. This underscores the need for tools like Tagvenue PRO that not only simplify operations but also provide tangible results.

Early reviews from users of Tagvenue PRO are overwhelmingly positive, with many reporting substantial time savings and increased booking efficiency. One venue manager remarked that the platform’s automation “learns your communication style,” producing responses that resonate with the brand’s voice, thus maintaining an authentic customer experience. Another user praised the platform’s simplicity, noting, “It’s not rocket science. It’s very easy to use, and that’s a huge plus.” Feedback like this reinforces Tagvenue’s goal of equipping venues, regardless of their size, with user-friendly tools that help them succeed.

As the market for event venues continues to evolve, the tools that businesses use to manage their operations must evolve too. Tagvenue PRO is designed not just as a management tool but as a comprehensive solution that integrates seamlessly with the daily operations of venues. By leveraging AI technology, Tagvenue is setting a new standard for venue management, providing a much-needed lifeline for venue managers who are often inundated with inquiries and administrative tasks.

For anyone interested in exploring Tagvenue PRO, further information can be found at tagvenue.com/pro. The platform is poised to empower venue managers with the resources they need to maximize their booking potential and streamline their operations effortlessly.

-

I used the Beelink SER9 Pro mini PC’s AI Voice Kit, and it certainly aided day-to-day tasks in the office

The Beelink SER9 Pro mini PC is an office productivity machine that incorporates a unique AI voice kit designed to enhance user interaction. It is making headlines in the tech community not just for its performance but also for innovative features that promise to change how professionals use mini PCs in their daily tasks.

With a premium all-metal casing, the Beelink SER9 Pro carries a modern aesthetic that speaks volumes about its build quality. Targeted towards both office productivity and mid-level content creation, it combines the necessary power and upgradeability that users demand. The standout feature is the AI voice kit, which comes equipped with a microphone and speakers that optimize vocal pickup. This integration supports language models like ChatGPT, enhancing the machine’s capabilities in processing voice commands and facilitating a smoother interaction with AI applications.

During testing, the Beelink SER9 Pro exceeded expectations with its handling of native Windows 11 Pro applications, providing seamless performance across tasks. The initial setup process was straightforward, and users can expect a quick transition to office software such as Microsoft Office. Notably, while engaged in video and image editing with applications like Adobe Premiere Pro, DaVinci Resolve, and Adobe Photoshop, the mini PC displayed impressive speed and responsiveness, particularly benefiting from AI enhancements that allowed for more efficient generative editing functionalities.

However, the performance of the SER9 Pro is not without its limitations. The machine’s configuration includes 32GB of LPDDR5X-6400 RAM, which is soldered for speed and therefore cannot be upgraded, and it can feel somewhat limiting during intensive tasks. Users have reported that while HD and some light 4K editing is feasible, render times in DaVinci Resolve can lag, indicating that larger workloads could stress the system. These limits are important considerations for content creators who intend to use this machine for extensive projects.

An important challenge noted during content creation was the relatively small 1TB SSD capacity. While sufficient for many applications, transferring 4K footage to the internal storage quickly occupies significant space, necessitating an upgrade. Fortunately, the SER9 Pro accommodates dual M.2 slots for storage expansion. Users can easily install a second SSD, such as a 4TB Samsung 9100 Pro, drastically expanding the available storage to a more manageable level.

Additionally, Beelink’s Mate SE expansion dock allows consumers to further increase storage capabilities, with the potential to add two more M.2 drives. When combined with the internal capacity, this could yield a total of 16TB of PCIe 4.0 SSD space, positioning the SER9 Pro as an excellent option for professionals in need of high-capacity storage solutions for demanding projects.
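The 16TB figure can be checked with simple arithmetic. The sketch below assumes a 4TB drive in each of the four M.2 positions (two internal, two in the dock), which the review implies but does not spell out; reaching 16TB on that assumption means replacing the stock 1TB SSD rather than keeping it.

```python
def total_tb(drive_sizes_tb):
    """Sum the capacities of all installed drives, in terabytes."""
    return sum(drive_sizes_tb)

# Assumption: every slot holds a 4TB drive (2 internal + 2 in the Mate SE dock).
all_upgraded = [4, 4, 4, 4]

# Alternative: keep the stock 1TB SSD and add 4TB drives elsewhere.
stock_kept = [1, 4, 4, 4]

if __name__ == "__main__":
    print(total_tb(all_upgraded), "TB with four 4TB drives")
    print(total_tb(stock_kept), "TB if the stock 1TB SSD is kept")
```

Under these assumptions, keeping the original 1TB drive gives 13TB in total rather than 16TB.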

The SER9 Pro indicates a shift towards versatile, compact, and efficient computing solutions that cater to modern business needs and creative endeavors. Its integration of AI technology not only enhances the PC’s capabilities but also reflects a growing trend in the tech industry, where AI is becoming an essential collaborator in the workplace. The practical applications of the Beelink SER9 Pro’s voice kit for daily office tasks, combined with its robust performance, exemplify how mini PCs can adapt to the evolving demands of users.

In conclusion, the Beelink SER9 Pro is not just another mini PC on the market; it’s a comprehensive tool designed to support professionals and creators alike. Whether you are leveraging its AI voice kit in a bustling office or pushing the limits of its editing capabilities, the SER9 Pro is positioned to meet and exceed the expectations of a diverse range of users seeking a powerful and adaptable computing solution.

-

New Microsoft Edge AI Browser Copilot Mode: Designed to Simplify Your Life

The digital landscape is evolving rapidly, and browsers are no longer mere gateways to the web—they’re becoming intelligent assistants. With the introduction of Microsoft Edge’s Copilot Mode, users can now experience a browser that goes beyond traditional functions, transforming how we manage our online activities.

Imagine a browsing experience where your online assistant remembers your preferences, organizes your tasks clearly, and anticipates your needs, all within your browsing window. This is precisely what Copilot Mode aims to achieve. Designed to boost productivity and improve the user experience, this suite of AI-powered tools redefines what we can expect from a web browser.

Kevin Stratvert highlights five key features in his overview, demonstrating how this mode enhances productivity and organization. The first notable capability is the multi-tab analysis. Gone are the days of frantically navigating multiple tabs; this feature simplifies the workflow by organizing and comparing open tabs, generating summaries that allow for better decision-making.

The second is the “Journeys” feature, which groups your browsing history into structured cards. This organization enables users to easily revisit past sessions and build on their previous work, whether for academic research or travel planning. It greatly reduces the time spent searching through endless browsing histories.

Enhanced intelligent search is another remarkable addition. This feature adjusts to user intent, providing responses that range from quick answers to in-depth explanations tailored to individual needs. Users can expect faster and more accurate results, ensuring they can find exactly what they need without excessive searching.

Furthermore, real-time content interaction tools such as Quick Assist and Copilot Vision empower users to engage with complex information efficiently. Instant insights, comprehensive summaries, and sentiment analysis become readily accessible, allowing for a streamlined approach to processing information.

The true power of Copilot Mode lies in its AI-powered task automation capabilities. Users can command the browser to manage intricate processes directly, from grocery shopping and restaurant bookings to organizing emails and planning vacations. This seamless approach dramatically reduces the need to switch between several applications, consolidating tasks directly within the browser and saving crucial time and effort.

With increasing workloads and responsibilities, Copilot Mode serves as more than just a productivity hack; it becomes an indispensable ally in managing daily challenges. By taking on repetitive tasks, it frees up mental bandwidth so users can focus on what truly matters—whether that’s strategic planning, creative work, or simple relaxation.

Microsoft Edge’s Copilot Mode represents a significant leap forward in browser technology, introducing features that prioritize user experience and productivity. As businesses and individuals seek ways to optimize their workflows, these enhancements are poised to play a pivotal role. The interconnectedness of tools developed within the Copilot framework signifies a future where technology not only supports our tasks but improves how we approach them.

In conclusion, Microsoft Edge’s Copilot Mode is not merely about surfing the web more quickly; it’s about crafting a more intuitive and efficient digital experience. As we adapt to this technology, it is clear that the potential for improved productivity and enhanced organization is just the beginning. Be prepared for a future where your browser is not just a tool, but an integral partner in your everyday life.

-

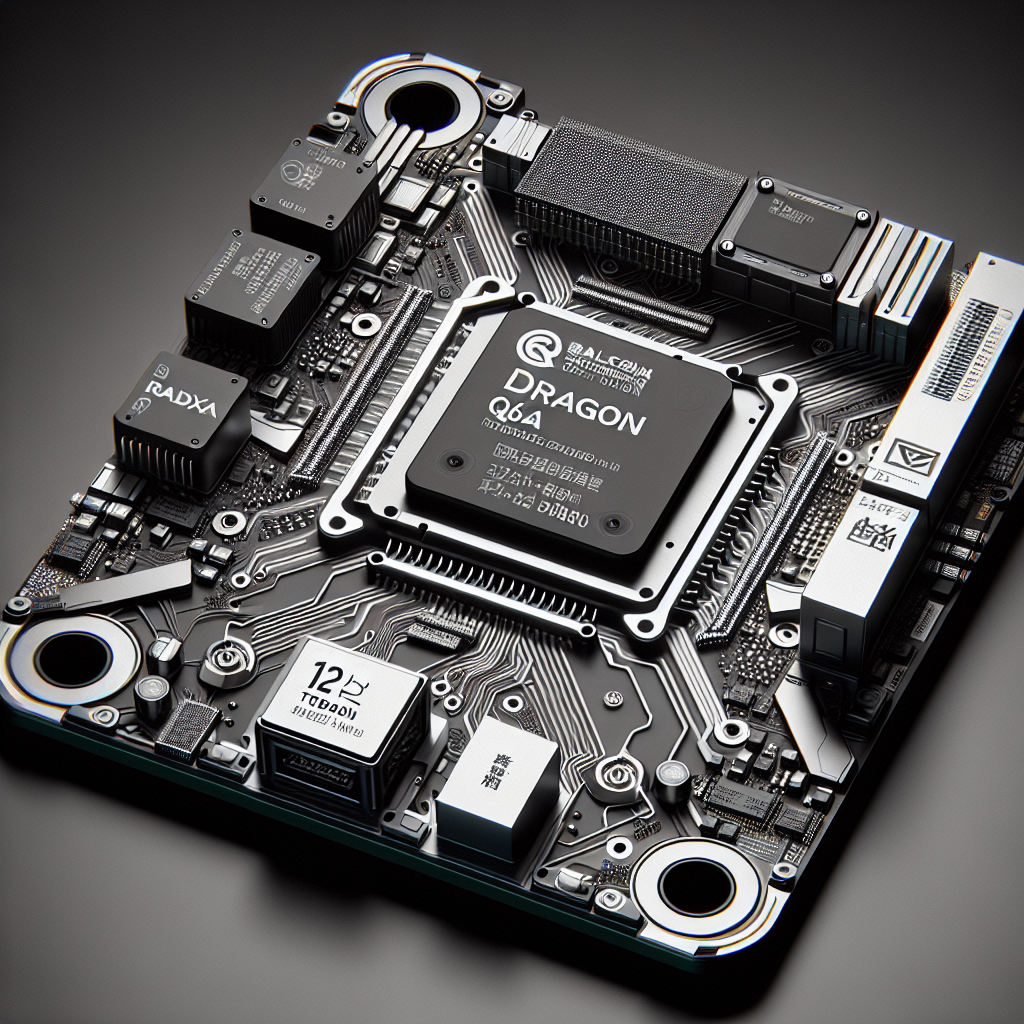

Radxa Rolls Out Dragon Q6A Featuring Qualcomm QCS6490, 12 TOPS NPU, and 6th-Gen AI Engine

Radxa has recently unveiled its latest innovation, the Dragon Q6A, a compact yet robust single-board computer designed to meet the demands of industrial, IoT, and edge computing environments. This powerful board leverages Qualcomm’s QCS6490 octa-core platform, promising a blend of high performance and versatility.

At the heart of the Dragon Q6A is an impressive octa-core Kryo CPU configuration, consisting of one Prime core clocking in at 2.7 GHz, three Gold cores at 2.4 GHz, and four Silver cores at 1.9 GHz. Complementing this CPU powerhouse is the Adreno 643 GPU, which supports a range of graphics APIs including Vulkan 1.3, OpenCL 2.2, OpenGL ES 3.2, and DirectX 12, making it suitable for various demanding applications.
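On a Linux install, the core layout described above can be checked against what the kernel reports. The sketch below is illustrative only. It assumes the standard cpufreq sysfs file `cpuinfo_max_freq` is exposed for each core, and the function names are hypothetical. Kernel-reported frequencies may differ slightly from the quoted clocks.

```python
from collections import Counter
from pathlib import Path


def read_max_freqs_khz(sysfs_root: str = "/sys/devices/system/cpu") -> list[int]:
    """Read each core's maximum frequency (kHz) from the Linux cpufreq sysfs files."""
    freqs = []
    for path in sorted(Path(sysfs_root).glob("cpu[0-9]*/cpufreq/cpuinfo_max_freq")):
        freqs.append(int(path.read_text().strip()))
    return freqs


def summarize_clusters(freqs_khz: list[int]) -> dict[float, int]:
    """Map each distinct max frequency (GHz, rounded to 0.1) to its core count."""
    counts = Counter(round(khz / 1_000_000, 1) for khz in freqs_khz)
    return dict(sorted(counts.items(), reverse=True))


# Illustrative values matching the clocks quoted above, not readings from a real board.
example = [1_900_000] * 4 + [2_400_000] * 3 + [2_700_000]
print(summarize_clusters(example))  # {2.7: 1, 2.4: 3, 1.9: 4}
```

On actual hardware, `summarize_clusters(read_max_freqs_khz())` would group the eight cores by their reported maximum clocks.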

One of the standout features of the Dragon Q6A is its Qualcomm 6th-generation AI Engine, which is equipped with a Hexagon DSP, Tensor Accelerator, and Coprocessor 2.0. This configuration enables the board to deliver an impressive 12 TOPS (Tera Operations Per Second) of AI compute performance while maintaining low power consumption, showcasing Radxa’s commitment to energy-efficient solutions in the AI space.

The flexibility offered by the Dragon Q6A extends beyond processing capabilities. It provides a diverse array of storage and expansion options, including an M.2 M-Key socket for NVMe SSDs, a microSD slot, and connectors for UFS/eMMC storage. This wide range of options allows users to choose the configuration that best suits their needs, with LPDDR5 RAM options ranging from 4 GB to 16 GB, operating at speeds of up to 5500 MT/s. The standard 40-pin GPIO header enhances the board’s compatibility with various peripherals, supporting interfaces such as UART, I²C, SPI, PWM, and more.

In terms of connectivity, the Dragon Q6A is equipped with state-of-the-art features, including integrated Wi-Fi 6 and Bluetooth 5.4, provided through a Quectel FCU760K module. Gigabit Ethernet connectivity is also standard, with optional Power over Ethernet (PoE) support. This extensive connectivity ensures that the Dragon Q6A can be integrated into a myriad of networked environments, further broadening its applicability.

The display capabilities of the Dragon Q6A are equally impressive. It includes HDMI 2.0 support for 4K video output at 30 Hz, alongside a MIPI DSI interface and multiple camera connections via MIPI CSI. The ability to connect up to four cameras allows for advanced visual systems and applications, which are essential in industrial automation and smart IoT deployments.

Software support for the Dragon Q6A is robust, offering compatibility with Radxa OS, various flavors of Linux including Ubuntu, Armbian, Arch, and Fedora, as well as Windows 11 IoT Enterprise. Developers are encouraged to leverage Qualcomm’s AI Hub, which provides pre-optimized on-device models and hardware-control libraries, simplifying the process of implementing AI capabilities in applications. Additionally, Radxa has made available a wealth of documentation through its Wiki, helping users navigate through the setup and programming of the Dragon Q6A.

In conclusion, the Radxa Dragon Q6A emerges as a significant player in the realm of single-board computers, particularly for applications that demand high performance, AI capabilities, and flexible connectivity options. Its unique combination of features not only fulfills present technological requirements but also anticipates future trends in edge computing and IoT applications.