-

Coinbase’s AI payments system joins Linux Foundation, gathers support from Google, Stripe, AWS and others

In a significant development for the intersection of finance and technology, Coinbase has announced that its AI-focused payment protocol, x402, will join the Linux Foundation. This initiative aims to develop an open, standardized infrastructure for high-frequency micro-transactions that legacy financial systems have struggled to efficiently manage. As the demand for innovative financial solutions surges, x402 is poised to address new challenges in digital commerce.

The x402 protocol is designed to enable agentic payments, meaning transactions carried out autonomously by AI agents. The concept has gained particular traction within the cryptocurrency sector, which sees programmable, blockchain-based micro-payments as the future of commerce. x402 aims to overcome the limitations of traditional payment systems by supporting transactions worth mere fractions of a cent, executed at a pace that even credit card networks find challenging.

By partnering with the Linux Foundation, Coinbase is setting the stage for a community-governed ecosystem that embraces transparency and interoperability. The x402 Foundation, which will oversee this initiative, already includes vital industry players such as Cloudflare and the widely recognized payments provider, Stripe. With the backing of major companies, this collaboration emphasizes the industry’s collective interest in pushing the boundaries of what’s possible in AI-driven transactions.

At the core of x402’s framework is the ambition to create an environment similar to what Secure Sockets Layer (SSL) technology achieved for internet security. The foundation aims to standardize AI commerce protocols that can enhance interoperability across different platforms and ensure secure transactions are executed efficiently. As Jim Zemlin, CEO of the Linux Foundation, articulated, the goal is to create an open, community-governed space that evolves with input from diverse stakeholders in the ecosystem.

The roster of supporters for the x402 Foundation showcases the widespread interest in this initiative. Companies spanning multiple industries are expressing intent to participate, including Adyen, Amazon Web Services, American Express, Google, and Visa, among many others. This diverse coalition suggests that x402 will cater to a wide range of applications and use cases, from e-commerce to advanced financial services.

“The shift toward agentic commerce requires cloud infrastructure that is as open as the protocols it supports,” stated James Tromans, Managing Director for Web3 and Digital Assets at Google Cloud. His commitment to interoperable standards encapsulates the movement towards seamless AI-driven transactions that can operate securely across various platforms.

As x402 unfolds, it holds the promise of transforming how micro-transactions are conducted, offering a scalable alternative to legacy systems that currently dominate the market. The push to implement open-source solutions underlines the growing recognition that collaboration can foster innovation, paving the way for a new era in financial transactions driven by AI technology.

In summary, the convergence of Coinbase’s x402 payment protocol with the Linux Foundation marks a pivotal step towards reshaping digital commerce. By fostering a collaborative environment among industry leaders, this initiative stands to enhance the efficiency and security of digital transactions, ultimately setting a higher standard in the financial technology landscape. The implications are far-reaching, with the potential to revolutionize the way we conduct business in a rapidly evolving digital world.

-

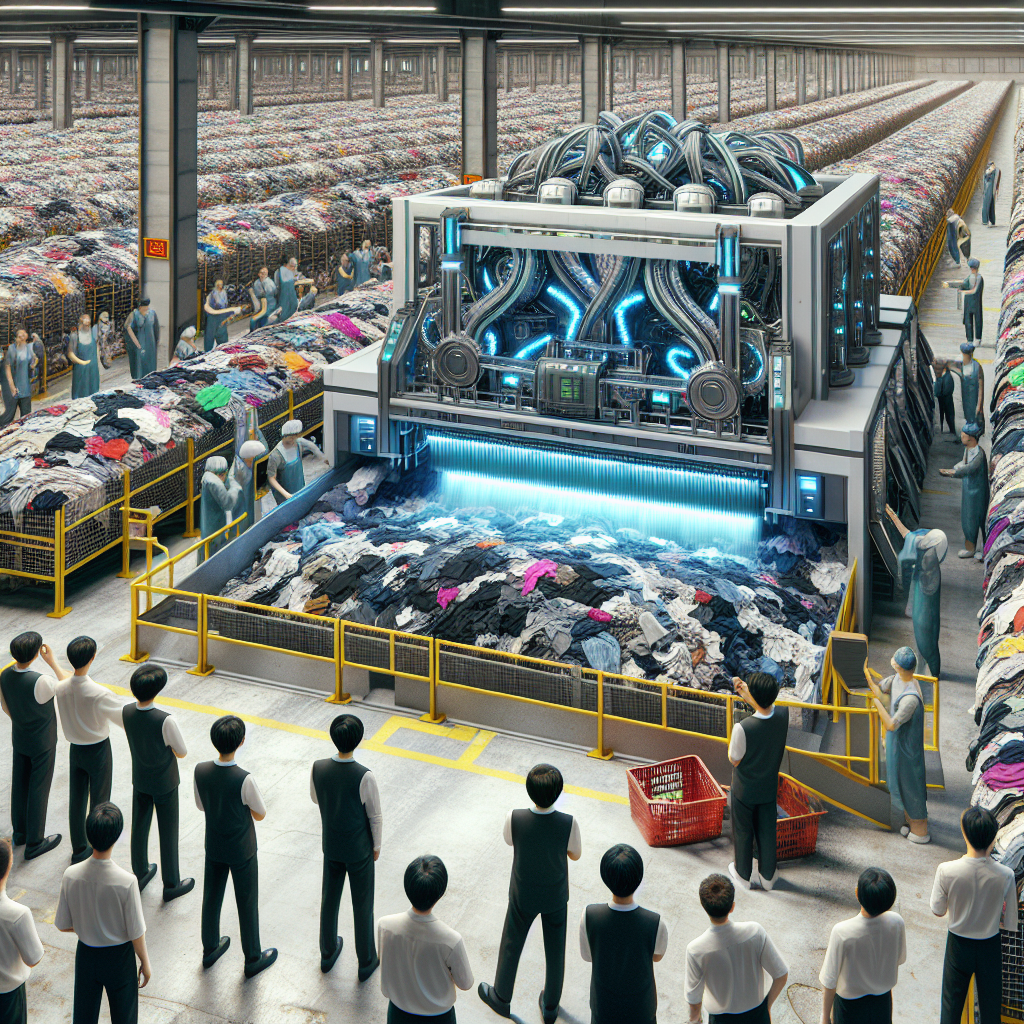

AI machine sorts clothes faster than humans to boost textile recycling in China

In a significant breakthrough for recycling technology, a company in eastern China has introduced an innovative AI-powered machine that sorts discarded clothing with remarkable speed and efficiency. Located in Zhangjiagang, a city in Jiangsu province, this cutting-edge machine, known as Fastsort-Textile, offers a glimpse into the future of textile recycling and the role artificial intelligence can play in addressing the pressing issue of synthetic textile waste.

Developed by DataBeyond, an artificial intelligence recycling company founded in 2018, Fastsort-Textile was recognized as one of Time magazine’s Best Inventions of 2025. The machine’s unique ability to analyze the composition of textiles enables it to sort materials much faster than human workers, revolutionizing the way textile waste is processed.

The textile industry has long been a significant contributor to environmental pollution, with synthetic textiles made from fossil fuels accounting for roughly 70% of global production. Given this context, the Fastsort-Textile machine aims to reduce the volume of textile waste that ends up incinerated, thus promoting recycling and resource efficiency. Mo Zhuoya, the CEO of DataBeyond, emphasizes the importance of this technology in making full use of textile waste and mitigating its harmful environmental effects.

The current use of the Fastsort-Textile machine is exclusive to Shanhesheng Environmental Technology Ltd., a textile recycling facility in Zhangjiagang that installed the machine in 2025. This facility has rapidly turned into a hub for innovative recycling practices, setting a standard for others to follow. By employing the Fastsort-Textile machine, the facility has been able to enhance productivity significantly.

The numbers illustrate the machine’s efficiency. Fastsort-Textile processes 100 kilograms (220 pounds) of clothing in just two to three minutes, a task that would typically take a human worker around four hours. The machine has a processing capacity of two tons per hour, whereas a team of two workers would need nearly two days to sort the same amount, which highlights the speed of the AI-driven technology.
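The figures quoted above can be cross-checked with a little arithmetic. The sketch below assumes metric tons (1 ton = 1,000 kg) and that "two days" means round-the-clock work; neither assumption is stated in the source, and the helper name is illustrative.

```python
def kg_per_hour(kilograms, minutes):
    """Convert 'kilograms sorted in N minutes' into an hourly rate."""
    return kilograms * 60 / minutes

# Machine: 100 kg every two to three minutes (figures from the article).
machine_low = kg_per_hour(100, 3)
machine_high = kg_per_hour(100, 2)

# Human: about four hours per 100 kg (figure from the article).
worker_rate = 100 / 4

# Time for two workers to sort two tons, assuming 1 ton = 1,000 kg.
hours_for_two_workers = 2000 / (2 * worker_rate)
days_round_the_clock = hours_for_two_workers / 24

print(machine_low, machine_high)      # machine rate range in kg/h
print(worker_rate)                    # one worker in kg/h
print(hours_for_two_workers)          # hours for a two-person team
print(round(days_round_the_clock, 2)) # days if worked continuously
```

Under these assumptions the machine sorts 2,000 to 3,000 kg per hour, which matches the stated two-ton capacity at the slower end. Two workers would need 40 hours, or about 1.7 days of continuous work, which fits "nearly two days"; in eight-hour shifts it would be five working days.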

Fastsort-Textile works by combining an AI scanner with a network of conveyor belts. Stacks of textiles are loaded onto the belts and carried through the scanner, which analyzes each item’s fabric composition so that the items can be sorted by fiber type automatically. This process not only boosts productivity but also improves sorting accuracy, enabling higher-quality recycling outcomes.

The implications of this technology extend beyond mere efficiency; it symbolizes a crucial step towards sustainable practices in the fast-fashion industry. As China leads the globe in textile exports—reaching a staggering $142 billion, which is more than double that of the European Union—the adoption of such recycling technologies becomes essential in combating pollution associated with clothing production and disposal.

Despite the evident advantages offered by the Fastsort-Textile machine, challenges remain in scaling such solutions across the textile recycling industry. Currently, only one facility in China employs this technology, and broader adoption will require investment and partnership across sectors to facilitate the infrastructure necessary for widespread use. As demand for sustainable practices increases, the potential for this or similar technologies to become prevalent is promising.

As companies and investors begin to recognize the importance of sustainable practices, the Fastsort-Textile machine stands as an example of how innovation can foster change in traditional industries. It showcases the capability of artificial intelligence to address complex environmental issues while providing tangible benefits for business leaders looking to invest in responsible, future-oriented technologies.

-

Schneider Electric Deploys AI Across 100 Use Cases in Industrial Operations

Schneider Electric has taken a significant leap forward in the integration of artificial intelligence within its industrial operations. By actively deploying nearly 100 distinct AI use cases into production, the company is setting a new standard for effective, large-scale AI implementation. Unlike many organizations that struggle with projects stuck in pilot phases, Schneider Electric ensures its initiatives demonstrate clear business value from the outset.

Every year, Schneider Electric processes approximately 7.5 million customer service tickets through automated systems. This process enhancement indicates a profound shift in how AI is impacting heavy industries, allowing for repetitive tasks to be handled by intelligent systems. Previously, employees would analyze and route incoming requests manually; now, AI takes care of this, enabling staff to dedicate their efforts to higher-value interactions with customers.

Philippe Rambach, the Chief AI Officer at Schneider Electric, emphasizes the importance of aligning AI projects with real business and customer needs. By focusing on employee pain points and where AI can provide tangible support, Schneider Electric ensures that each initiative is designed for scalability. This strategic approach, initiated with the establishment of an AI Hub in late 2021, has allowed the company to avoid the fate of many industry peers whose pilot projects never see the light of day.

The journey from concept to operational AI is illustrated by a compliance tool developed to guide marketing teams through European anti-greenwashing regulations. Initially launched as a standalone application, user adoption remained static until it was integrated into existing workflows, such as the document management system that employees use daily. This example highlights a crucial aspect of AI integration: when AI lives outside of established workflows, it struggles for attention. Conversely, AI embedded within existing processes becomes an invisible yet critical infrastructure component.

Rambach further explains that the majority of successful AI transformation—between 80% and 90%—relates to managing change, providing training, and redesigning workflows rather than the technology itself. To address this, Schneider Electric has implemented mandatory AI fundamentals training for its workforce of 140,000 employees. This training is recorded and tracked similarly to compliance requirements, ensuring employees understand AI’s capabilities and can effectively utilize it within their roles.

Beyond customer service enhancements, the operational impact of AI extends into predictive maintenance and supply chain economics. By integrating intelligent forecasting systems, Schneider Electric optimizes its inventory management solutions, significantly improving efficiency and reducing costs. The predictive capabilities enable the company to proactively address equipment failures before they occur, thus minimizing downtime and operational disruption. This shift towards predictive maintenance results in enhanced reliability and ultimately supports higher levels of customer satisfaction.

As AI continues to evolve, Schneider Electric’s commitment to embedding these technologies into every layer of its operations sets a benchmark for the industrial sector. By securing tangible results and maintaining a strong focus on change management, the organization showcases how AI can redefine operational paradigms. It exemplifies a model where technology seamlessly becomes part of everyday processes, ensuring that both employees and customers benefit from smarter, more efficient systems.

Ultimately, Schneider Electric serves as a leading example of how businesses can successfully transition AI from theoretical applications to practical tools that drive substantial operational improvements. As industries begin to take notice of these advancements, the importance of embracing AI as a core business strategy becomes increasingly evident.

-

Rules of attraction: Auckland’s AI-savvy Dexibit sells to UK-listed firm for $21m

The recent acquisition of Auckland-based Dexibit by UK-listed Accesso marks a significant milestone in the realm of artificial intelligence and business resilience. With a sale price of US$12 million (NZ$21 million), this transaction emphasizes how technology for cultural and entertainment institutions is evolving while highlighting the challenges faced during the pandemic.

Dexibit, a company that emerged from the shadows of a tough pandemic, has showcased remarkable adaptability. Originally focused on museums, the company has expanded its offerings to encompass stadiums, theme parks, and various commercial attractions. This evolution positions Dexibit not just as a provider of analytics for these institutions but also as a vital partner in their recovery and growth strategies post-lockdown.

Accesso, with a market capitalization of £84 million (NZ$194 million) and operations spanning nine countries, has found valuable crossover synergy with Dexibit. Offering services from point-of-sale systems to ticketing and safety waivers, Accesso serves diverse operators, including snowboarding facilities. This breadth indicates that the acquisition is not just a merger; it represents a strategic alignment of shared goals in enhancing visitor experiences across attractions.

Leadership within Dexibit remains intact following the acquisition, with founder and chief executive Angie Judge transitioning to a senior vice president role within Accesso Intelligence, which encompasses Dexibit’s operations. This leadership continuity is crucial for leveraging the existing strength and expertise that Dexibit has built, ensuring that their innovative spirit continues to thrive under the Accesso umbrella. Other key team members will also retain their positions, reinforcing a sense of stability during this transition period.

Financially, the deal was structured in two parts. The upfront cash payment of US$7.1 million sets a strong basis, while the additional US$5 million earnout, contingent on meeting predefined milestones, reflects confidence in Dexibit’s growth potential. Together the two components account for the roughly US$12 million headline price. The earnout not only incentivizes Dexibit to reach new heights but also signals faith in the company’s strategic direction.

Dexibit’s journey illustrates not just the power of innovation but the vital role of resilience in the face of adversity. As noted by Icehouse Ventures CEO Robbie Paul, who played an integral part in supporting Dexibit through initial investment rounds, the company’s passion and determination were palpable even during the turbulent Covid lockdowns. This authenticity not only captured investor attention but also established Dexibit as a standout among start-ups, ultimately leading to its visibility in the global market.

One of the striking components of this deal is the story of progression, from the humble beginnings of Dexibit to landing prestigious clients such as the Smithsonian and MoMA. The journey reflects a keen understanding of the cultural sector’s needs and the technology required to meet them. The narrative of empowering cultural institutions fosters a deep connection with investors, customers, and partners alike, creating a community bound by shared goals.

With this acquisition, the potential for enhancing visitor experiences at various cultural and entertainment venues is boundless. Accesso’s established suite of services combined with Dexibit’s robust data analytics capabilities could revolutionize how attractions understand and cater to their visitors. This synergy presents an enticing prospect for business leaders in the cultural sector, as visitor insights lead to more tailored experiences and efficient operations.

As this transaction unfolds, many will watch keenly how Dexibit integrates and transforms under Accesso’s strategic vision. The anticipation of growth, innovation, and the potential to set new standards in the industry illustrate the dynamic evolution of technology in enhancing visitor engagement. This acquisition is not just a financial event; it symbolizes the intersection of technology, culture, and community resilience in a post-pandemic era.

The pathway ahead seems promising as Dexibit becomes part of a larger ecosystem focused on delivering enhanced value to cultural institutions worldwide. The commitment to providing powerful insights and analytics can drive innovation and foster community engagement across various platforms, ultimately leading to better experiences for visitors.

-

Visa and Ramp Develop AI Agents for Corporate Bill Pay

In a significant development for the world of corporate finance, Visa and Ramp have unveiled a pioneering initiative that incorporates artificial intelligence (AI) agents into the realm of corporate bill payments. This innovative collaboration aims to automate the bill payment process, minimizing manual work, curbing unnecessary expenditure, and unlocking avenues for substantial savings.

The launch of these AI agents was officially announced in a press release on March 31, 2026, showcasing a forward-thinking approach that is set to provide Ramp’s customers with enhanced payment flexibility and improved control over their corporate expenditures. This initiative is part of a broader partnership between Visa and Ramp, which includes a renewed multi-year issuing agreement and deeper integration of technology, positioning Ramp as a key player for targeted corporate service use cases within Visa’s expansive network.

Colin Kennedy, Chief Business Officer at Ramp, emphasized that the best financial systems incorporate controls inherently, rather than retrofitting them post-transaction. This philosophy aligns perfectly with Visa’s vision of streamlining commerce while enhancing security and simplicity. The current partnership seeks to further this vision by integrating Ramp’s advanced financial operations platform — covering everything from corporate cards to automated bookkeeping and expense management — with Visa’s global payments infrastructure.

The momentum of Ramp’s offerings is evident in its impressive growth trajectory, having seen its enterprise customer base expand by 133% year on year in 2025. This surge underscores a marked shift in the market as enterprises are increasingly seeking solutions that replace outdated financial infrastructures characterized by manual controls and disconnected systems. Visa’s solutions, as noted by Chris Newkirk, the president of commercial and money movement solutions, aspire to diminish friction in payment processes rather than exacerbate it. The automation and real-time control aspects of Ramp’s offering are in direct alignment with these objectives.

Particularly noteworthy is Ramp’s strategy to deploy AI agents that are designed to enforce company expense policies automatically, block unauthorized spending, and mitigate the risk of fraud. This initiative was notably first rolled out in July when Ramp introduced its initial AI agents, demonstrating its commitment to leveraging advanced technology to redefine corporate financial operations. Following this, the company took further strides in October by adding AI agents specifically designed for invoice processing, showcasing its dedication to continuous enhancement and innovation.

Moreover, Visa has also been working on establishing a Trusted Agent Protocol in partnership with Worldpay and Cloudflare. This protocol aims to address pre-existing challenges in agent-driven commerce and facilitate secure communication between merchants and AI agents, thereby alleviating some of the key obstacles encountered during the shopping process on behalf of consumers. Such technological advancements are indicative of a broader trend towards integrating AI into commerce, with the ultimate goal of ensuring security and operational efficiency.

This initiative not only represents a significant step forward for Visa and Ramp but also sets a notable precedent for other companies looking to enhance their financial processes through automation. As businesses increasingly seek tools to alleviate the burdens of manual operations and improve financial oversight, the implications of AI-driven solutions are profound.

In conclusion, the partnership between Visa and Ramp exemplifies how the fusion of AI technology and financial services can create powerful tools for corporations aiming to streamline their payment processes and secure their financial operations. As businesses navigate the complexities of financial management, such innovations will be crucial in maintaining competitive advantages and adapting to the evolving marketplace.

-

FactSet Accelerates Innovation in Banking with Launch of a New AI-Native Solution in Partnership with Finster AI

In the ever-evolving landscape of finance, the need for innovation and efficiency has never been more pronounced. On March 30, 2026, FactSet, a prominent global financial digital platform, unveiled the alpha launch of its latest offering: FactSet AI for Banking. Developed in collaboration with Finster AI, this groundbreaking AI-powered workflow automation ecosystem is designed specifically for investment banking teams and research and sell-side firms, marking a significant advancement in financial technology.

FactSet AI promises to revolutionize traditional banking processes by providing a unified, secure environment that automates complex deal workflows. This initiative underscores FactSet’s commitment to enhancing the efficiency of banking operations and unlocks new data-driven insights that are crucial for strategic decision-making. As stated by Kate Stepp, FactSet’s Chief AI Officer, the goal is to create a seamless integration of powerful intelligence and AI agents that enhance user experience while ensuring trust in core systems. The aim is to empower clients in their journey from insight to action with unprecedented speed and reliability.

What sets FactSet AI for Banking apart is its intelligent design, which positions it at the crossroads of established market data providers and the emerging landscape of standalone AI solutions. Unlike conventional tools that often require users to toggle between disparate platforms, FactSet’s solution offers a streamlined, purpose-built workflow. This is especially critical in regulated environments where data security and compliance are paramount. The deployment of natural language prompts facilitates the orchestration of essential transaction assets and complex processes throughout the deal lifecycle, providing users with full traceability and accountability.

Furthermore, the incorporation of multiple agents within this AI solution is a game-changer for investment bankers. By automating the proactive, trigger-based generation of vital materials—such as pitch presentations, company profiles, detailed memos, and in-depth research—the platform allows bankers to redirect their focus towards higher-value tasks. This shift not only enhances productivity but also enables banking professionals to deliver differentiated insights and foster deeper client relationships.

Kris Karnovsky, Executive Vice President at FactSet, emphasized the significance of this advancement, stating that it reflects the company’s commitment to addressing the daily challenges faced by bankers. With the integration of intelligent automation and broad user experience interoperability, FactSet AI stands to substantially change the way investment banking teams operate, with implications for how financial institutions engage with clients and handle transactions.

The investment in Finster AI by FactSet further cements the strategic partnership between the two companies and highlights the importance of innovation in remaining competitive within the industry. The collaboration is poised to foster continued advancements in next-generation financial solutions, creating a platform that not only meets present demands but also anticipates future challenges.

In summary, the launch of FactSet AI for Banking signifies a pivotal moment in the financial sector, where automation and AI are no longer mere enhancements but foundational elements of operational strategy. As FactSet continues to push the boundaries of what is possible in investment banking, the expectation is that clients will feel the impact of this innovation in their daily operations. With a focus on building a more efficient, insightful, and connected banking environment, FactSet is poised to set new standards in the financial landscape.

-

How Tavily reduced AI search caching costs by 95% with Amazon S3 Express One Zone | Amazon Web Services

Tavily, a leading AI infrastructure company, is making strides in enhancing the efficiency and cost-effectiveness of AI search technologies. Their approach focuses on building a web access layer specifically designed for agents and large language models (LLMs). By providing developer-friendly APIs, Tavily enables real-time, structured information retrieval from the web, making access to crucial data near-instantaneous for intelligent systems. Their mission resonates with thousands of top-tier research teams and enterprises globally, and their platform combines AI-powered Search, Extract, Map, and Crawl APIs to deliver structured web content promptly.

The AI search engines that fuel autonomous agents face demanding performance challenges that can significantly impact user experience. For these systems, maintaining single-digit millisecond response times is imperative to ensure fluid conversations and enable prompt decisions. Additionally, they need to manage unpredictable traffic bursts while achieving elastic scalability, all without escalating operational costs. Tavily’s AI search engine, built specifically to support autonomous agents, was grappling with substantial issues in its existing document database caching layer as it continued to grow.

As Tavily’s user base expanded and workloads increased, the complications of managing their caching layer profoundly affected both cost and performance. The original document caching system, developed for human-centric applications, was misaligned with the high demand for low-latency responses required by AI agents. With latency spikes disrupting agent interactions and a manual capacity planning process consuming crucial engineering resources, Tavily was compelled to seek a more effective solution.

This scenario prompted Tavily to explore migrating their caching architecture to Amazon S3 Express One Zone, an innovative storage solution designed to offer a cost-effective, scalable alternative. As they detailed in a recent blog post, this shift resulted in remarkable performance enhancements alongside a significant reduction in operational costs—up to 95% less for caching. The migration not only streamlined costs but also ensured that Tavily’s system could consistently meet the critical latency benchmarks of 10 milliseconds.

The notable transition highlighted the limitations of the traditional document database model for AI workloads. Unpredictable latency had become a persistent adversary, as the initial caching layer struggled to deliver the consistent performance required for real-time data delivery. For autonomous AI agents, even slight delays in response time are detrimental; thus, Tavily needed a reliable solution to eliminate variability.

By choosing to employ Amazon S3 Express One Zone, Tavily was able to craft a caching system that bridged the gap between cost efficiency and high-performance requirements. Their solution involved a thorough analysis of their existing architecture challenges that led them to make the significant leap towards a more streamlined, performance-driven caching mechanism. This transformation hinged on methodical planning, evaluation, and implementation, which they outline comprehensively, providing invaluable insight for other organizations tackling similar hurdles.
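The source does not reproduce Tavily’s implementation, so the following is only a generic cache-aside sketch of the pattern described: look up a result by key in an object store, and fall back to the slower search path on a miss. Every name here (`InMemoryObjectStore`, `SearchCache`, the `cache/` key prefix, the TTL) is hypothetical, and the in-memory store is a stand-in for a real object storage client.

```python
import hashlib
import json


class InMemoryObjectStore:
    """Hypothetical stand-in for an object store with get/put by key."""

    def __init__(self):
        self._objects = {}

    def put(self, key, body):
        self._objects[key] = body

    def get(self, key):
        # Returns None on a miss instead of raising.
        return self._objects.get(key)


class SearchCache:
    """Cache-aside wrapper: check the store first, fetch and write back on a miss."""

    def __init__(self, store, fetch, ttl_seconds, clock):
        self.store = store
        self.fetch = fetch      # slow path, e.g. a live web search
        self.ttl = ttl_seconds
        self.clock = clock      # injected so expiry can be tested

    def lookup(self, query):
        # Hash the query so arbitrary text maps to a fixed-length object key.
        key = "cache/" + hashlib.sha256(query.encode("utf-8")).hexdigest()
        now = self.clock()
        raw = self.store.get(key)
        if raw is not None:
            entry = json.loads(raw)
            if entry["expires_at"] > now:
                return entry["result"]  # fresh hit
        result = self.fetch(query)
        body = json.dumps({"expires_at": now + self.ttl, "result": result})
        self.store.put(key, body.encode("utf-8"))
        return result
```

In a real deployment the store class would wrap an actual storage client. The design point is that each cached result is a single keyed object, so capacity no longer has to be planned by hand the way it was for the database-backed cache described above.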

The implications of Tavily’s successful migration are far-reaching, particularly as enterprises increasingly adapt to demands for sophisticated AI solutions and scalable infrastructures. Their experience can serve as a practical guide for businesses looking to optimize their operations in a landscape where effective caching and retrieval directly impact the performance of AI agents.

In conclusion, Tavily’s ambitious move towards Amazon S3 Express One Zone showcases a strong commitment to innovation and operational excellence in the AI domain. Their ability to significantly cut costs while enhancing performance stands as a testament to the potential of modern cloud solutions, offering an inspiring model for other organizations aiming to harness the full capabilities of AI.

-

‘A high-speed digital cheat sheet’: Google unveils TurboQuant AI-compression algorithm, which it claims can hugely reduce LLM memory usage

Google recently unveiled its latest innovation in artificial intelligence, the TurboQuant algorithm, designed to significantly reduce memory usage for large language models (LLMs) while maintaining accuracy across demanding workloads. As industries increasingly rely on AI tools for large-scale processing, efficient memory management becomes crucial, and TurboQuant aims to address this critical challenge.

The memory strain associated with traditional LLMs has long been a bottleneck in AI system performance. Central to this issue is the key-value cache, which serves as a ‘high-speed digital cheat sheet’ for the models, allowing for rapid reuse of data in computations. While this mechanism enhances the responsiveness of AI systems, it also places considerable demands on memory resources, particularly as models continue to scale.

As LLMs grow in complexity and size, managing memory efficiently without sacrificing speed or accessibility presents a significant hurdle. Current methods such as quantization, which reduces numerical precision to compress data, carry drawbacks of their own, often reducing output quality or adding overhead from stored constants. Google says TurboQuant is designed to overcome these longstanding limitations by introducing a two-stage compression process.

The initial stage, named PolarQuant, transforms vectors from standard Cartesian coordinates into polar representations. By doing so, it condenses information into more compact forms, using radius and angle values rather than multiple directional components. This approach reduces the need for repeated normalization steps, which are typically resource-intensive in conventional quantization methods.
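To make the Cartesian-to-polar idea concrete, here is a deliberately simplified sketch. It is not Google’s PolarQuant algorithm: it merely pairs up coordinates, keeps each pair’s radius at full precision, and stores the angle as a small integer code. The function names and the choice of 4 bits per angle are assumptions made for illustration.

```python
import math


def to_polar_pairs(vec):
    """Convert consecutive (x, y) coordinate pairs into (radius, angle) pairs."""
    if len(vec) % 2:
        raise ValueError("this sketch assumes an even number of dimensions")
    return [(math.hypot(x, y), math.atan2(y, x))
            for x, y in zip(vec[0::2], vec[1::2])]


def quantize_angle(theta, bits):
    """Map an angle in [-pi, pi] to one of 2**bits evenly spaced codes."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    # The modulo wraps +pi back onto the code for -pi (the same direction).
    return round((theta + math.pi) / step) % levels


def dequantize_angle(code, bits):
    """Recover the representative angle for a stored code."""
    step = 2 * math.pi / (1 << bits)
    return code * step - math.pi


def compress(vec, bits):
    return [(r, quantize_angle(t, bits)) for r, t in to_polar_pairs(vec)]


def decompress(pairs, bits):
    out = []
    for r, code in pairs:
        t = dequantize_angle(code, bits)
        out.extend((r * math.cos(t), r * math.sin(t)))
    return out


vec = [3.0, 4.0, -1.0, 2.0, 0.5, -0.5, 0.0, 1.0]
restored = decompress(compress(vec, bits=4), bits=4)
```

Because the radius is stored separately, each restored pair keeps its original length and only its direction is rounded, by at most half a quantization step.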

Next in the process is the Quantized Johnson-Lindenstrauss (QJL) stage. While PolarQuant compresses the bulk of the data, small residual errors remain. QJL acts as a corrective layer that reduces each vector element to a single sign bit (positive or negative) while preserving crucial relationships among data points. This additional refining step improves the accuracy of attention scores, which play a vital role in determining how models prioritize information during processing.
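The idea that single sign bits can still preserve relationships between vectors can be illustrated with a sign-based random projection. The sketch below is an assumption-laden toy, not Google’s implementation: it projects a key with a random Gaussian matrix, keeps only the signs plus the key’s norm, and then estimates the query-key inner product using the identity E[(g·q)·sign(g·k)] = sqrt(2/π)·(q·k)/‖k‖ for a standard Gaussian vector g. All names and sizes are illustrative.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def make_projection(rows, dim, rng):
    """Random Gaussian projection matrix shared by keys and queries."""
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(rows)]


def encode_key(key, projection):
    """Keep one sign bit per projected coordinate, plus the key's norm."""
    signs = [1 if dot(row, key) >= 0 else -1 for row in projection]
    return signs, math.sqrt(dot(key, key))


def estimate_inner_product(query, encoded_key, projection):
    """Unbiased estimate of <query, key> from the sign bits alone."""
    signs, key_norm = encoded_key
    total = sum(dot(row, query) * s for row, s in zip(projection, signs))
    return math.sqrt(math.pi / 2) * key_norm * total / len(projection)


rng = random.Random(0)
projection = make_projection(rows=4000, dim=8, rng=rng)
key = [0.5, -1.0, 2.0, 0.0, 1.5, -0.5, 1.0, 0.25]
query = [1.0, 0.5, 1.0, -1.0, 0.5, 0.0, 2.0, 1.0]

exact = dot(query, key)
estimate = estimate_inner_product(query, encode_key(key, projection), projection)
print(exact, estimate)
```

The estimate is noisy for any single run, and the noise shrinks as the number of projection rows grows. The query side is left unquantized here; only the stored key is reduced to bits.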

According to Google’s reported testing results, TurboQuant has already achieved substantial efficiency gains across various long-context benchmarks when evaluated against open models. Impressively, it has been shown to reduce the memory footprint required for key-value cache by a factor of six, all without affecting the consistency of downstream results.
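To give a sense of scale for the reported sixfold reduction, the sketch below estimates key-value cache size with the usual back-of-the-envelope formula (two tensors per layer, one for keys and one for values). The model dimensions are hypothetical and do not describe any model Google tested; only the factor of six comes from the article.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bytes_per_value):
    """Approximate KV cache size for one sequence."""
    # Factor of 2: one tensor for keys and one for values in every layer.
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value


GIB = 1024 ** 3

# Hypothetical model: 32 layers, 32 KV heads of size 128, 16-bit values,
# and a 32,768-token context.
baseline = kv_cache_bytes(layers=32, kv_heads=32, head_dim=128,
                          tokens=32_768, bytes_per_value=2)

# Apply the sixfold reduction reported in the article.
compressed = baseline / 6

print(f"baseline:   {baseline / GIB:.2f} GiB")
print(f"compressed: {compressed / GIB:.2f} GiB")
```

For this hypothetical configuration the cache drops from 16 GiB to roughly 2.67 GiB per sequence, which is the kind of saving that allows longer contexts or more concurrent requests on the same hardware.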

Additionally, TurboQuant supports quantization down to as few as three bits without requiring any retraining. Because it is compatible with existing model architectures, businesses can adopt TurboQuant without extensive modifications to their current systems.

Such advancements in AI technology indicate not just incremental improvements but transformative changes that can enhance how businesses utilize AI tools. As TurboQuant improves the efficiency of memory usage, it opens the door for more powerful and responsive AI applications, driving innovation across various sectors.

In conclusion, Google’s TurboQuant presents a groundbreaking solution aimed at tackling memory challenges faced by large language models. By optimizing how data is stored and processed, Google is paving the way for more scalable and efficient AI systems that can better meet the demands of modern applications. As companies continue to embrace AI, innovations like TurboQuant hold the potential to significantly impact productivity and performance in the tech landscape.

-

India’s sovereign AI models find early takers among healthcare, education institutions

India is witnessing a surge in demand for sovereign AI models, particularly from the healthcare and education sectors. This early enthusiasm serves as a substantial validation for the country’s endeavor to develop AI technologies uniquely suited to its needs. Institutions both within India and abroad are expressing keen interest in these AI solutions that promise to deliver region-specific benefits.

Since the inception of the India AI Mission, a number of tech firms have announced their visions for sovereign AI products tailored to the Indian landscape. Notably, companies like Fractal Analytics and Tech Mahindra are spearheading this movement, planning to finalize their AI offerings by 2026. These initiatives are evidently resonating well, especially among hospitals and educational establishments eager for customized applications that align with local contexts.

Nikhil Malhotra, the Chief Innovation Officer and Global Head of AI & Emerging Tech at Tech Mahindra, elaborated on ongoing discussions the company is having across various regions, including Eastern Europe and Southeast Asia. The focus in these dialogues is to create localized and culturally relevant AI models that cater to the distinct linguistic and regulatory frameworks of each country.

In India, Tech Mahindra’s foundational AI model has emerged as one of the most downloaded options available, reflecting a burgeoning interest from developers and the wider ecosystem. Once the model achieves production readiness, plans are in place to integrate it into both state and central government frameworks systematically.

Moreover, feedback on an education-focused large language model (LLM) being developed in collaboration with various educational partners has been positive. Malhotra believes stakeholders appreciate the model’s localized methodology, its linguistic attributes, and how it aligns with national educational priorities. The model is reportedly in the advanced stages of refinement, suggesting that a substantial commitment is being made to ensure its effectiveness and utility.

On the healthcare front, Fractal Analytics has also reported encouraging responses to its Vaidya 2.0 models. According to Suraj Amonkar, the Chief AI Research Officer at Fractal, these models are engineered to integrate health chatbot functionalities and tackle diverse use cases ranging from report analysis to symptom triaging and overall wellness. This multifaceted approach illustrates the intent to build a robust AI framework that can address both general and specialized healthcare needs.

Despite this promising interest from the healthcare and education sectors, enterprise-level clients have so far been slower to respond to sovereign AI solutions. Anushree Verma, Senior Director Analyst at Gartner, noted that adopting a sovereign AI model within an enterprise isn’t straightforward. She highlighted challenges such as ensuring a strong customer experience and accommodating multilingual requirements, which may necessitate more nuanced solutions than those currently available.

The growing traction of these sovereign AI models indicates a clear path forward for India in the realm of artificial intelligence development. As the nation strives to carve out its identity in the global AI landscape, the focus on localized solutions could not only meet regional needs but potentially transform how businesses leverage technology to enhance operational efficiencies and improve service delivery.

As these sovereign AI projects progress, the broader implications for businesses are becoming increasingly apparent. The practical applications of these technologies in everyday settings could pave the way for enhanced customer engagement, targeted solutions, and improved user experiences, which are essential for fostering a competitive edge in various sectors.

In conclusion, the early interest in sovereign AI models from healthcare and educational institutions suggests a phase of significant technological advancement in India. The commitment of key companies to develop these customized models reflects an ongoing transformation that could position India as a leading player in the global AI arena by aligning technological capabilities with local needs.

-

‘Nuclear energy is the essential backbone for this future, but the industry remains trapped in a delivery bottleneck’: Microsoft and Nvidia team up to tackle ‘historic surge in power demand’ by using AI to help the nuclear power industry

The collaboration between Microsoft and Nvidia represents a pivotal move in addressing the extensive delays faced by the nuclear power industry. As the global energy sector grapples with an unprecedented surge in power demand, the capabilities of AI emerge as a critical solution to streamline processes and enhance efficiency.

At the crux of the issue lies the complexity of nuclear power project execution. Customized engineering requirements, along with fragmented data sets, create challenges that inhibit timely deliveries. Engineers currently invest thousands of hours in meticulously drafting and reviewing vast documentation, making these projects vulnerable to inefficiencies that can lead to costly overruns.

By leveraging AI tools, Microsoft and Nvidia aim to tackle these delivery bottlenecks effectively. High-fidelity digital twins and AI-driven simulations will enable engineers to validate designs in a virtual environment, allowing for the reuse of proven engineering patterns. This proactive approach not only expedites project timelines but also ensures that inconsistencies are detected at an early planning stage.

Generative AI also plays a transformative role in automating critical documentation workflows. The time and resources spent on drafting, formatting, and cross-referencing can now shift towards evaluating safety measures and enhancing operational standards. According to Microsoft, this transition is vital in creating an infrastructure capable of meeting modern demands, a vision that emphasizes the need for reliable nuclear energy as a backbone for power solutions.

“The world is racing to meet a historic surge in power demand with an infrastructure pipeline built for the analog age… Nuclear energy is the essential backbone for this future,” a Microsoft spokesperson asserted in a recent blog post. This sentiment captures the urgency and importance of innovation within the sector, setting a clear direction for future advancements.

Moreover, once nuclear plants are operational, the integration of AI-powered sensors and digital twins will continue to enhance performance monitoring. These technologies provide real-time visibility, enabling predictive maintenance and ensuring human operators can make informed decisions grounded in continuous data analysis. By linking design assumptions to operational performance, AI further fosters transparency among stakeholders, establishing a predictable environment that reduces overall risk.

Encouragingly, preliminary applications of these tools by Southern Nuclear and the Idaho National Laboratory have illustrated their potential. By applying AI to streamline engineering and safety analysis reports, these organizations have achieved improved consistency and faster decision-making, a trend that could significantly reshape industry practices.

Ultimately, the collaboration between Microsoft and Nvidia signifies a critical step towards modernizing the nuclear power sector. As AI tackles the inherent complexities and inefficiencies that current processes face, the industry can potentially overcome the delivery bottlenecks that have long hindered growth.

These advancements not only highlight the profound impact of AI within the energy sector but also point towards a future where nuclear power can play its rightful role in meeting the world’s escalating energy demands. As the drive for sustainable and reliable energy sources accelerates, innovations like these could serve as a model for revamping industries beyond nuclear energy.