-

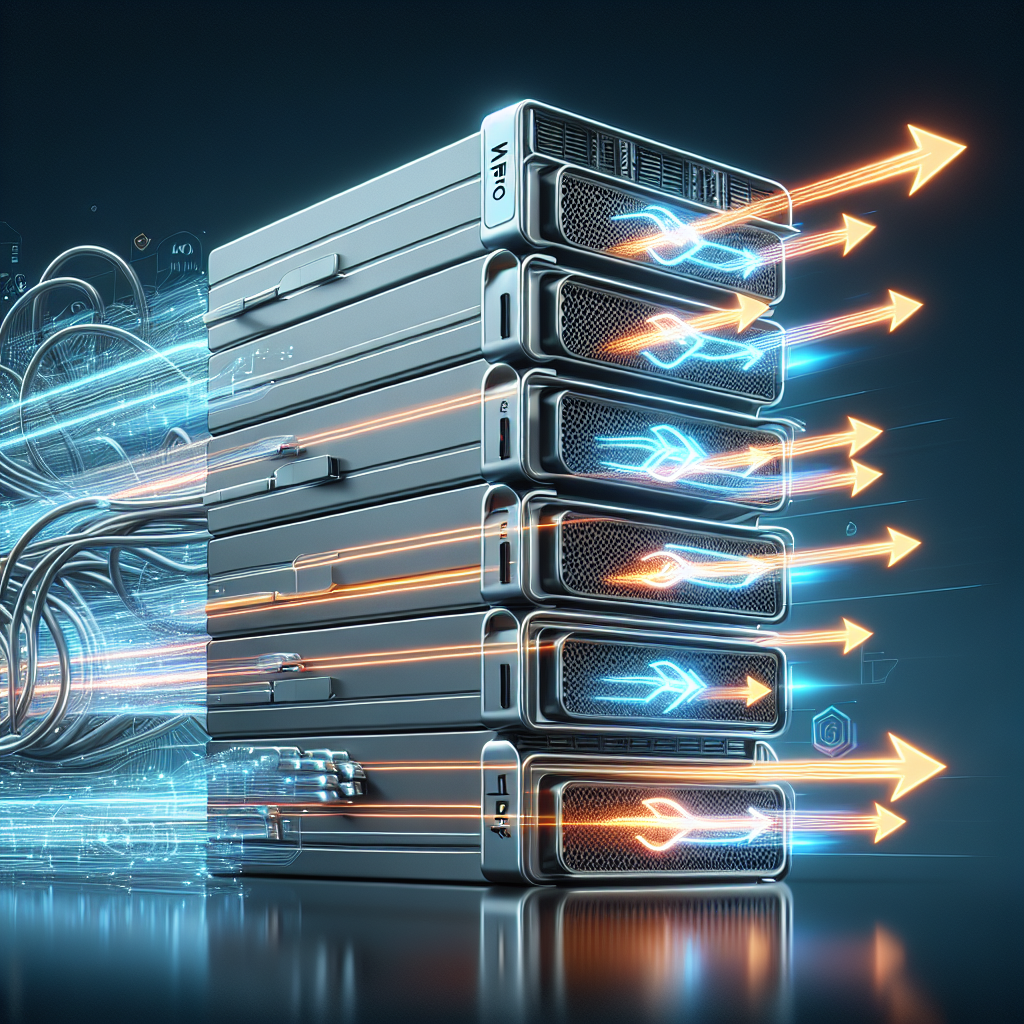

M4 Pro Macs Stack: Thunderbolt 5 Links Make Mac AI Go Way Faster

In a significant development for local AI, three releases have arrived together: the open-source Exo 1.0 from Exo Labs, and Apple’s macOS 26.2 with RDMA over Thunderbolt 5. The combination allows users to run trillion-parameter AI models across clustered desktop Macs, reducing the need for expensive cloud infrastructure and paving the way for more efficient, accessible AI workflows.

The implications are significant for developers, researchers, and business leaders alike. Clustering multiple Mac Studios or Mac Minis makes it possible to handle large machine learning tasks that were once the domain of specialized servers and extensive data centers, bringing powerful AI tools to a much wider group of users.

Unpacking Apple’s AI Advancements

At the core of these advancements is Exo 1.0, which streamlines distributed machine learning like never before. With a simple installation process and an intuitive interface, Exo enables real-time performance monitoring and incorporates tensor parallelism for efficient model sharding across multiple devices. This means that developers can now optimize their models across a cluster of Macs without getting bogged down by traditional bottlenecks associated with high-complexity tasks.
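The idea behind tensor parallelism can be shown with a minimal sketch in plain Python. This is not Exo's or MLX's API: the function names are hypothetical, and the "devices" are just list entries. Each device holds a block of a weight matrix's columns, computes its part of the output, and the parts are concatenated.

```python
# Column-wise tensor parallelism for y = x @ W, in plain Python.
# Illustrative only: "devices" are list entries, not real machines,
# and none of these names come from Exo or MLX.

def matmul(x, w):
    """Multiply a (rows x inner) matrix by an (inner x cols) matrix."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*w)] for row in x]

def split_columns(w, num_devices):
    """Give each device a contiguous block of W's columns."""
    cols = len(w[0])
    step = (cols + num_devices - 1) // num_devices  # ceiling division
    return [[row[i:i + step] for row in w] for i in range(0, cols, step)]

def tensor_parallel_matmul(x, w, num_devices):
    """Each device multiplies x by its own column shard; results are gathered."""
    shards = split_columns(w, num_devices)
    partials = [matmul(x, shard) for shard in shards]
    # Gather step: concatenate each device's output columns, row by row.
    return [sum((p[r] for p in partials), []) for r in range(len(x))]

x = [[1, 2], [3, 4]]
w = [[1, 0, 2, 1], [0, 1, 1, 3]]
full = matmul(x, w)
sharded = tensor_parallel_matmul(x, w, num_devices=2)
print(full == sharded)
```

In a real cluster the gather step is where the interconnect matters, since every layer's partial outputs have to cross the link between machines.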

Additionally, RDMA (Remote Direct Memory Access) over Thunderbolt 5 delivers data transfer speeds reported to be up to 10 times faster than previous generations. This removes much of the interconnect overhead that has historically slowed distributed AI workloads, allowing clusters to scale more smoothly on devices equipped with M4 Pro chips or above.

The MLX Distributed Framework

Complementing these features is the MLX Distributed Framework, which enhances AI performance on Apple Silicon. This framework is designed to support both dense and quantized models, making it versatile for various applications, ranging from high-precision tasks to those that function efficiently in resource-constrained environments. This adaptability opens new avenues for businesses looking to leverage AI without the requirement for extensive investments in specialized hardware.
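A rough sizing sketch shows why the dense-versus-quantized distinction matters for a desktop cluster. It counts weight storage only and ignores activations, caches, and runtime overhead. The 400 GB of usable memory per node is a hypothetical figure chosen for illustration, not a specification from the article.

```python
import math

def weight_memory_gb(num_params, bits_per_weight):
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

def nodes_needed(num_params, bits_per_weight, usable_gb_per_node):
    """Smallest number of nodes whose combined memory holds the weights."""
    return math.ceil(weight_memory_gb(num_params, bits_per_weight) / usable_gb_per_node)

PARAMS = 1_000_000_000_000   # one trillion parameters
USABLE_GB_PER_NODE = 400     # hypothetical usable memory per Mac

for bits in (16, 8, 4):
    gb = weight_memory_gb(PARAMS, bits)
    nodes = nodes_needed(PARAMS, bits, USABLE_GB_PER_NODE)
    print(f"{bits}-bit weights: {gb:.0f} GB, at least {nodes} node(s)")
```

Under these assumptions, quantizing from 16-bit to 4-bit cuts the weight footprint from 2,000 GB to 500 GB, which is the difference between five nodes and two.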

Scalability and Accessibility in Focus

With macOS 26.2 and its focus on unified memory architecture, Apple significantly improves scalability and accessibility for developers. This shift allows for cost-effective local AI workflows, facilitating innovation without necessitating a reliance on cloud services that can introduce latency and increase operational costs. Apple’s ecosystem is rapidly evolving into a robust platform where advanced machine learning models can thrive right from users’ desktops.

As companies seek to harness AI’s power for a competitive edge, these technological breakthroughs position Apple at the forefront of the conversation. Imagine the corporate possibilities—businesses can reduce overhead costs tied to cloud computing while still engaging in complex AI-related projects. Researchers now have the freedom to experiment and innovate without being tethered to external servers.

Final Thoughts: A New Era in AI

Apple’s initiatives in AI technology are not just technical upgrades; they signify a major shift in how businesses and individuals can approach machine learning. By making powerful AI accessible on local hardware, Apple is not just enhancing the capabilities of its devices—it’s reshaping the broader landscape of artificial intelligence. The advent of tools like Exo 1.0 and enhanced data transfer technologies could redefine what is possible in AI development, ensuring faster, more capable, and more affordable access to advanced technology. As we stand on the cusp of this new era, the question remains: how will you leverage these innovations in your work?

-

ICORE-3576Q38 SoM packs RK3576 AI processor into a 38 mm module

The ICORE-3576Q38 stands as an impressive innovation in the realm of embedded systems, combining compact size with powerful processing capabilities. Developed by T-Firefly, this system-on-module (SoM) is designed for applications where space constraints are critical while still delivering robust multi-core performance and local artificial intelligence (AI) acceleration.

This module measures just 38 mm × 38 mm, making it a practical solution for embedded and industrial designs. It utilizes the Rockchip RK3576 application processor, specifically tailored for environments demanding high-performance computation in a small footprint. The compact design does not compromise its capabilities; it offers a significant degree of multimedia and I/O support.

The ICORE-3576Q38 family comprises three variants: the standard ICORE-3576Q38 for general commercial use and two industrial versions, the ICORE-3576JQ38 and ICORE-3576MQ38. While all models share a unified architecture, their specifications diverge slightly, offering different CPU frequencies and operational temperature ranges to suit various application needs.

The standard model excels with CPU frequencies reaching up to 2.2 GHz, achieved through a quad Cortex-A72 and quad Cortex-A53 configuration. In contrast, the industrial variants are limited to a maximum frequency of 1.6 GHz, which ensures stability and reliable performance across broader temperature ranges—an essential feature for machinery operating in harsh conditions.

One of the standout features of the ICORE-3576Q38 is its integrated neural processing unit (NPU), capable of delivering up to 6 TOPS (tera operations per second) of inference performance. It supports a range of data types for AI applications, including INT4, INT8, INT16, FP16, BF16, and TF32. The NPU’s capability to operate in both collaborative and independent modes offers designers flexibility in deploying AI functionalities based on their specific requirements.

Memory and storage options for the ICORE-3576Q38 are also highly adaptable. Users can select between LPDDR4 or LPDDR4X configurations ranging from 4 GB to 16 GB, with eMMC storage options spanning from 16 GB to a robust 256 GB. Additionally, optional UFS 2.0 support can be implemented for applications demanding higher storage bandwidth, thus enhancing data throughput for critical applications.

Connectivity and expansion capabilities are cemented through flexible PCIe interfaces. One particular PCIe 2.1 interface can be configured for various functions, including SATA 3.1, PCIe, or USB 3.2 Gen1, while a second PCIe interface remains dedicated to PCIe or SATA 3.1 expansions. This level of customization is vital for businesses looking to integrate diverse peripherals or expand functionalities on the fly.

Furthermore, the module accommodates advanced display outputs, supporting HDMI 2.1 or eDP 1.3 with resolutions reaching 4096 × 2160 at 120 Hz, enhanced by DisplayPort 1.4 capabilities. The diverse camera input options through MIPI CSI interfaces enable robust image capture capabilities, suitable for various industrial applications.

In terms of multimedia performance, the ICORE-3576Q38 offers hardware acceleration for decoding VP9, AVS2, and AV1 video up to 8K at 30 frames per second or 4K at 120 frames per second. This functionality extends to H.264 standards, supporting both encoding and decoding at impressive frame rates and high resolutions. Such features ensure that the SoM can handle demanding video applications effectively.
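The two decode limits quoted above describe the same pixel throughput, as a quick calculation shows. The frame dimensions are an assumption (UHD sizes of 7680 × 4320 for 8K and 3840 × 2160 for 4K), since the source gives only "8K" and "4K".

```python
def pixel_rate(width, height, fps):
    """Pixels decoded per second for a given frame size and frame rate."""
    return width * height * fps

# Assumed UHD frame sizes; the article only says "8K" and "4K".
rate_8k30 = pixel_rate(7680, 4320, 30)
rate_4k120 = pixel_rate(3840, 2160, 120)

print(rate_8k30, rate_4k120, rate_8k30 == rate_4k120)
```

An 8K frame has four times the pixels of a 4K frame, so a quarter of the frame rate keeps the decoder's workload constant.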

Software support for the ICORE-3576Q38 is extensive, including Android 14, various Linux distributions, Buildroot, and real-time Linux configurations, all accessible through T-Firefly’s dedicated wiki. This broad compatibility allows businesses to deploy software solutions suited to their operational needs more efficiently.

In summary, the ICORE-3576Q38 system-on-module signifies a notable advancement in embedded technology, bringing together compact design, powerful processing, and extensive support for AI and multimedia applications. Its capabilities enable businesses across various sectors to harness the power of AI directly at the edge, streamlining operations and enhancing product offerings.

-

Ex-Nvidia billionaire unveils new AI chips after China IPO debut

A billionaire formerly of Nvidia has introduced a new series of AI chips, promising to revolutionize data processing and machine learning capabilities. The announcement follows the recent public offering in China of the company behind the chips, marking a pivotal moment in the competitive landscape of AI technology.

The newly unveiled technology sets its sights on mass production scheduled for 2026, with ambitious plans to enable clusters of more than 100,000 chips. This will significantly enhance AI training operations within data centers, providing a powerful infrastructure for both existing and emerging applications.

Current trends in artificial intelligence emphasize the need for robust hardware capable of managing vast amounts of data, particularly as AI applications such as natural language processing and computer vision continue to expand. The new chips are designed specifically to address these resource-intensive tasks, showcasing a blend of innovative engineering and advanced design tailored for the specific demands of AI workloads.

As the AI landscape evolves, companies are striving to reduce latency while improving efficiency and throughput. The introduction of these chips could offer a substantial leap forward in achieving those goals, particularly in environments that require rapid data processing and real-time analysis. This development aligns with the ongoing industry shift towards more distributed computing models and edge AI functionalities.

Furthermore, the strategic timing of this announcement is noteworthy. With the company’s IPO in China, it signals not only a robust market entry but also a commitment to engaging with a rapidly growing sector of tech enthusiasts and enterprises. Such initiatives are critical as they foster a greater variety of choices for companies in search of state-of-the-art AI solutions.

The architectural design of these chips has yet to be disclosed fully, but with previous experiences at Nvidia, the mastermind behind this venture has a track record of producing high-performance computing solutions that have reshaped industries. Insights on specific features and benchmarks will likely follow as the production date approaches, generating further anticipation within the tech community.

The ramifications of this release extend beyond technical advancements alone. Business leaders and investors may find this breakthrough attractive as it represents a strategic opportunity for partnerships and investments in AI technology. Companies relying on AI-driven insights can potentially leverage this new hardware to gain competitive advantages.

As businesses increasingly integrate AI into their operations, the importance of compatible and powerful infrastructure cannot be overstated. The ability to leverage clusters of ultra-efficient AI chips will open new avenues for innovation and enhance existing capabilities within organizations. For product builders and investors looking to capitalize on this trend, staying ahead of such developments will be crucial.

In summary, the introduction of these advanced AI chips, particularly with their vast cluster capabilities, stands as a testament to the ongoing evolution of artificial intelligence technology. The anticipated impact on data processing, machine learning training, and overall operational efficiencies presents a compelling opportunity for various stakeholders within the industry.

As the company gears up for mass production and continues to unveil further details leading up to 2026, all eyes will be on its progress. The intersection of AI technology and entrepreneurial spirit highlighted by this debut certainly promises to reshape the landscape of artificial intelligence and technology solutions.

-

How AI Is Rewriting the Healthcare Playbook

As the healthcare landscape continues to evolve, the integration of artificial intelligence (AI) is proving to be a game-changer. Faced with mounting complexities and labor pressures, health systems are increasingly turning to AI technologies to make faster, more reliable decisions. The need for innovation in this sector has never been greater, and AI is stepping up to meet those demands.

One of the most significant advancements being made is the use of AI to predict hospital operations. Traditional analytics often struggle to handle the myriad of variables that influence healthcare delivery. However, GE HealthCare’s Digital Twin technology redefines how hospitals manage these dynamics by creating virtual replicas of operations. This allows healthcare leaders to explore different scenarios, understand patient flows, and anticipate staffing needs before crises emerge.

The implementation of Digital Twins has led to remarkable improvements at institutions like Children’s Mercy Kansas City. For instance, the senior vice president and chief nursing officer, Stephanie Meyer, notes that this technology has helped the hospital prepare for surges in demand. By simulating potential increases in patient volume and testing staffing changes, hospitals can identify bottlenecks and mitigate them before they disrupt the patient care continuum.

Moreover, AI is rapidly advancing the capability of hospitals to make real-time operational adjustments. These digital tools can be deployed within months instead of years, relying on existing operational data to inform their simulations. This agility not only enhances operational efficiency but also allows healthcare leaders to make more informed decisions regarding capital planning and resource allocation.

In addition to enhancing predictive capabilities, the convergence of AI and cloud technologies is driving significant improvements in operational intelligence and financial performance. Cloud infrastructures empower health systems by aggregating operational, staffing, and clinical data, enabling continuous, real-time AI modeling. GE HealthCare’s Command Center exemplifies this effective utilization, allowing healthcare providers to project inpatient census levels, spot staffing shortages, and monitor bed capacity across their networks with unprecedented accuracy.

These innovations have tangible benefits. Reports indicate that some AI tools can achieve accuracy rates exceeding 90% in their predictive capabilities. This high level of precision allows hospitals to proactively tackle potential congestion issues and address delays in care. The financial implications of such efficiencies can lead to substantial cost savings while simultaneously improving patient outcomes.

Beyond just operational enhancements, AI is also personalizing patient care on a broader scale. For instance, health systems are employing multimodal models that analyze various data types to tailor cancer treatment plans. This individualized approach not only improves patient satisfaction but also fosters better healthcare results.

As artificial intelligence continues to reshape the operational paradigm of healthcare, hospitals that embrace these technologies will likely lead the pack in terms of efficiency, quality of care, and patient satisfaction. The integration of AI not only serves the immediate needs of hospitals but also positions them for future challenges as they continue to navigate the complex landscape of modern medicine.

In summary, the ongoing transformation fueled by AI in healthcare is indeed rewriting the playbook for how systems operate and deliver care. The convergence of predictive technologies and cloud solutions stands to reshape not only the financial viability of hospitals but also the quality of care provided to patients. As these technologies develop further, their potential to drive radical improvements in healthcare will only increase.

-

AI-Driven Convenience Is Replacing Traditional Shopping for Consumers

As digital commerce evolves, consumers are increasingly gravitating towards seamless purchasing journeys facilitated by artificial intelligence (AI). A recent PYMNTS Intelligence survey involving 1,425 adult consumers in the U.S. illustrates a significant shift in shopping behavior: over half of the respondents expressed a preference for completing purchases within an AI platform rather than being redirected to a traditional retailer’s website. This change underscores the growing trust in AI-powered shopping experiences and indicates a willingness to trade conventional browsing for enhanced convenience and personalized service.

The survey results reveal that 52% of AI users favor in-platform purchases, compared with 48% who prefer being directed to merchant sites for transactions. This near-even split marks a departure from prior eCommerce norms where product discovery and checkout were primarily tied to dedicated retailer platforms. The preference for integrated AI shopping experiences suggests that consumers are ready to embrace a more streamlined approach to online shopping, minimizing the typical friction involved in multi-site navigation.
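How close the split is can be gauged with a standard margin-of-error calculation. This is a rough sketch under stated assumptions: it treats the survey as a simple random sample and uses all 1,425 respondents as the base. The 52% figure applies to AI users, a subset whose size the article does not give, so the true margin would be wider.

```python
import math

def margin_of_error(p, n, z=1.96):
    """Approximate 95% margin of error for a proportion p from n respondents."""
    return z * math.sqrt(p * (1 - p) / n)

n = 1425   # full sample; the AI-user subset is smaller (size not reported)
p = 0.52   # share preferring in-platform purchases

moe = margin_of_error(p, n)
low, high = p - moe, p + moe
print(f"margin: +/-{moe * 100:.1f} points, interval: {low * 100:.1f}% to {high * 100:.1f}%")
```

Even with the most generous sample size, the interval includes 50%, so the data are consistent with an even split rather than a clear majority.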

Today’s AI solutions are evolving beyond simple recommendation engines and growing into comprehensive end-to-end commerce interfaces. This development allows consumers to conduct shopping more fluidly, reducing their desire to exit these AI environments. The trust consumers place in AI is deeply intertwined with the platforms’ ability to provide relevant product suggestions, compare options effortlessly, and anticipate needs, thereby enhancing the overall shopping experience.

Interestingly, the findings also reveal that practical incentives significantly influence consumers’ willingness to engage with AI-native commerce. Approximately 70% of AI users stated they would be amenable to seeing sponsored products in AI-generated lists if these resulted in exclusive discounts. This inclination showcases how value defines consumer expectations in the realm of AI-driven shopping.

Similarly, a compelling offer like free shipping reinforces this trend. The survey shows that about seven in ten AI users are open to accepting sponsored entries in exchange for free shipping, whereas fewer than 30% would rather see ad-free results and forgo such benefits. This pattern indicates that consumers have a pragmatic approach to advertising in these new shopping interfaces, provided that the payoff is tangible and immediate.

Another critical factor driving interest in AI utilities is personalization. The survey points towards a growing appreciation for tailored shopping experiences that resonate with individual consumer demands. When consumers are presented with options that reflect their preferences and past behavior, their probability of purchasing significantly increases. AI’s capability to curate these personal experiences is a pivotal element in redefining customer engagement.

The implications of these findings for retailers and investors are profound. Marketers and business owners must recognize this shifting landscape and modify their approaches to capitalize on the increased comfort consumers have with AI-integrated shopping. As AI becomes an integral part of the shopping ecosystem, traditional eCommerce strategies may need to be reevaluated in favor of models that emphasize AI-driven interfaces where convenience, personalization, and value markedly enhance the consumer experience.

In conclusion, the transition towards AI-driven shopping presents both opportunities and challenges. For businesses willing to adapt and leverage advanced technologies, the potential for growth is enormous. Retailers can engage their audiences more effectively by integrating AI into their commerce strategies and streamlining the purchasing journey, creating a win-win for consumers seeking convenience and businesses aiming to thrive in a competitive market.

-

VCI Global Expands Upstream into Energy Infrastructure With Up to 250MW Solar Initiative Positioned to Supply AI Data Centres in Malaysia

The transition to sustainable energy solutions is gaining momentum globally, and VCI Energy, a subsidiary of VCI Global Limited, is leading the charge in Malaysia with an ambitious new initiative. On December 19, 2025, VCI Energy announced its plans to develop a utility-scale solar photovoltaic platform capable of delivering up to 250 megawatts of clean energy. This initiative is particularly noteworthy not only for its renewable energy focus but also for its strategic alignment with the burgeoning needs of artificial intelligence (AI) data centres in Malaysia.

In partnership with DPS Energy Sdn Bhd, a key player in the renewable energy sector and a subsidiary of DPS Resources Berhad, this project will span approximately 600 acres of land at Lipat Kajang, Mukim Sungai Siput, Malacca. The Memorandum of Understanding (MoU) formalizes a collaboration aimed at building a robust infrastructure that directly supports the anticipated surge in energy demand from AI and cloud computing applications.

The significance of this initiative cannot be overstated, as it represents a crucial stepping stone for VCI Global in extending its influence within the energy sector. By entering the renewable energy market with a substantial solar project, VCI is poised to enhance its value proposition within the data centre ecosystem, addressing the critical energy requirements of digital infrastructure that is rapidly evolving to accommodate AI technologies.

This solar power platform is designed with scalability and future viability in mind. It is anticipated that the facility will produce between 350 and 450 gigawatt-hours (GWh) of electricity annually. These figures reflect solid engineering estimates based on solar irradiation and expected capacity utilization, which will be further optimized through long-term power purchase agreements. Moreover, the project’s capacity to integrate future battery energy storage systems signifies VCI’s commitment to remaining agile in an ever-changing energy landscape.
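The generation estimate can be sanity-checked by converting it into a capacity factor, the ratio of expected output to what the plant would produce running at full power all year. The sketch assumes the full 250 MW is built and treats that figure as the nameplate rating. The article does not say whether the rating is AC or DC.

```python
HOURS_PER_YEAR = 8760

def capacity_factor(annual_gwh, capacity_mw):
    """Expected annual output divided by output at full power all year."""
    max_gwh = capacity_mw * HOURS_PER_YEAR / 1000  # MWh -> GWh
    return annual_gwh / max_gwh

# Assumes the full 250 MW buildout quoted in the announcement.
low = capacity_factor(350, 250)
high = capacity_factor(450, 250)
print(f"implied capacity factor: {low:.1%} to {high:.1%}")
```

The quoted 350 to 450 GWh therefore implies a capacity factor of roughly 16% to 21%.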

The market dynamics driving this initiative are compelling. With the increasing demand for energy from data centres—largely fueled by the growth of AI workloads—VCI Global’s project is strategically positioned. This demand creates a significant opportunity for VCI to establish dedicated energy offtake agreements with various stakeholders, including utilities and industrial users, which could lead to long-lasting business relationships. Furthermore, VCI has noted strong commercial interest in adjacent AI infrastructure developments, indicating robust market confidence in this energy-first approach.

Financially, the project is projected to attain a valuation of approximately USD 200 million to USD 300 million upon full development, reflecting industry-standard metrics for large-scale solar assets. With an annual revenue potential ranging from USD 18 million to USD 24 million, the implications for VCI Global are significant, both in terms of immediate cash flow and long-term financial stability. Over a 20-year operational horizon, the cumulative contracted revenue could reach between USD 360 million and USD 480 million, contingent upon regulatory approvals and favorable tariff structures.
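The revenue figures follow from simple multiplication, and combining them with the generation estimate given earlier yields an implied average price per megawatt-hour. Pairing the low revenue with the low output (and high with high) is an assumption made here for illustration; the announcement does not state a tariff.

```python
def cumulative_revenue(annual_usd_m, years):
    """Total revenue in USD millions, assuming flat annual revenue."""
    return annual_usd_m * years

def implied_price_per_mwh(annual_usd_m, annual_gwh):
    """Average USD per MWh implied by annual revenue and annual output."""
    return annual_usd_m * 1e6 / (annual_gwh * 1e3)

total_low = cumulative_revenue(18, 20)
total_high = cumulative_revenue(24, 20)

# Assumed pairing: low revenue with low output, high with high.
price_low = implied_price_per_mwh(18, 350)
price_high = implied_price_per_mwh(24, 450)

print(f"20-year revenue: USD {total_low}M to USD {total_high}M")
print(f"implied price: USD {price_low:.2f} to {price_high:.2f} per MWh")
```

The flat-revenue assumption ignores tariff escalation and panel degradation, so the 20-year totals are best read as an undiscounted upper-level estimate.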

This venture is also in alignment with Malaysia’s national policies aimed at fostering advancements in AI and energy infrastructure, setting the groundwork for long-term economic benefits within the region. By contributing to the nation’s energy needs while simultaneously supporting the digital economy, VCI Global exemplifies a forward-thinking approach to renewable energy and technological integration.

In summary, VCI Global’s initiative not only underscores the company’s commitment to renewable energy but also highlights the increasing intersection of energy solutions and advanced technologies like artificial intelligence. As the demand for sustainable power escalates, VCI is positioned at the forefront of this critical industry, shaping the future of both energy generation and digital infrastructure in Malaysia. This strategic expansion marks a pivotal move as VCI Global reinforces its role in the global transition toward a greener, more integrated digital economy.

-

SG-based Startup Galatek Raises $30M Series A to Accelerate AI-powered Automation in Life Sciences and Semiconductor Manufacturing

In a significant move for the tech landscape, Galatek, a pioneering startup based in Singapore, has announced that it has successfully raised approximately $30 million in Series A funding. This strategic infusion of capital aims to accelerate the development of innovative artificial intelligence (AI) and automation solutions specifically tailored for the life sciences and semiconductor manufacturing sectors. As the demand for intelligent automation solutions continues to rise, Galatek positions itself at the forefront of this transformation by strengthening its global supply chain and enhancing local expertise across key markets.

Founded in Singapore’s vibrant innovation hub, Galatek is addressing some of the most critical challenges facing industries today. Through its advanced automation and AI solutions, the company is reshaping smart laboratory environments and advancing semiconductor packaging techniques, two vital sectors that rely heavily on precision and intelligent design. The startup’s approach combines agile research and development capabilities with localized commercial efforts, establishing itself as a key player in the rapidly evolving AI landscape.

Galatek’s focus is strategically placed on two high-demand market areas: life sciences and semiconductors. In the realm of life sciences, Galatek is leading the charge toward the smart laboratory of the future. By deeply integrating AI into research and development workflows, the company aims to empower scientists to significantly accelerate and de-risk discovery across a variety of complex applications. These applications include diagnostics, next-generation sequencing, 3D cell and organoid culture, drug development, and synthetic biology.

One of the standout offerings from Galatek is its Abio software platform, an integrated, AI-driven solution that unifies electronic laboratory notebooks (ELN), laboratory information management systems (LIMS), scientific data management systems (SDMS), and advanced automation. This comprehensive platform transforms scientific data into actionable insights, streamlining robotic integrations and supporting customizable, cloud-agnostic operations within smart labs. Furthermore, Galatek has established strategic partnerships with leading global pharmaceutical companies and prestigious research institutions, including collaborations with the National University of Singapore, enhancing its capability to co-create next-generation smart laboratories.

In the semiconductor sector, Galatek’s commitment to AI-driven innovation is evident through its focus on advanced packaging. The company integrates vision AI and motion control algorithms to meet the rigorous demands for precision and reliability in semiconductor processes. Galatek provides a range of essential process equipment and solutions, from front-end metrology and inspection to back-end advanced packaging. Some of its core offerings include fully automated overlay measurement systems, deep learning-based automatic optical inspection (AOI) systems, and precision wafer dicing equipment. The startup is also collaborating with several leading global semiconductor manufacturers, showcasing its capabilities from technological breakthroughs to commercial-scale delivery.

Galatek operates under a unique business model described as “Global Supply Chain + Localized Service,” which effectively combines competitive advantages from both local and international operations. This model not only amplifies Galatek’s operational efficiency but also enhances its ability to deliver tailored solutions to meet the needs of its diverse client base across various regions.

As the landscape of AI and automation technology evolves, Galatek exemplifies the drive to innovate within sectors that are critical to future advancements in science and technology. The company’s successful funding round will bolster its mission to revolutionize the life sciences and semiconductor industries by providing cutting-edge automation and AI solutions that are essential for advancing research, speeding up production processes, and ultimately facilitating remarkable discoveries.

-

Amazon taps an AWS veteran to lead new AI, chip and quantum group

Amazon is embarking on a strategic initiative that represents a significant consolidation of its advanced technology efforts, bringing together artificial intelligence (AI), custom silicon, and quantum computing under a new organizational framework. This move is led by Peter DeSantis, a seasoned Amazon Web Services (AWS) executive, following an internal memo from CEO Andy Jassy.

This reorganization is not merely a shift in titles; it symbolizes an important juncture for Amazon as it aims to leverage these technologies to enhance and evolve the customer experience significantly. By amalgamating its existing Nova AI models, artificial general intelligence (AGI) research, and in-house silicon programs—comprising Graviton, Trainium, and Nitro chips—alongside quantum computing initiatives, Amazon is poised to accelerate its innovation trajectory in a fiercely competitive market.

Peter DeSantis’s appointment to lead this newly formed group reflects his extensive experience at Amazon, where he has spent over 27 years. DeSantis played a pivotal role in creating and scaling AWS, particularly during the launch of Amazon Elastic Compute Cloud (EC2) in 2006. His leadership later expanded to overseeing vital infrastructure services such as storage, networking, and monitoring. DeSantis was also crucial in establishing Amazon’s custom silicon strategy, particularly following the acquisition of Annapurna Labs in 2015.

This strategic realignment underscores Amazon’s position as a leader in the digital marketplace, ranking first in Digital Commerce 360’s Top 2000 Database and third in the Global Online Marketplaces Database. The company’s ongoing commitment to integrate cutting-edge technology is evident in its priority to enhance customer engagement and service delivery through advancements in AI and machine learning.

In the new AWS AI leadership structure, DeSantis will have oversight over teams that include significant ongoing projects and services, while other leadership roles remain stable with additional responsibilities. This suggests a careful and structured approach to leadership continuity, which is vital in a fast-evolving technological landscape. Further announcements regarding AWS’s organizational structure are anticipated from Matt Garman, the AWS CEO.

Significantly, as part of the restructuring, Pieter Abbeel, a notable AI expert and co-founder of robotics company Covariant, has been appointed to spearhead Amazon’s frontier model research team within the new AGI organization. Abbeel’s continued involvement with Amazon’s robotics unit highlights the interconnectedness between robotics and AI, which is a cornerstone of Amazon’s technological aspirations.

The leadership transition occurs in the wake of notable developments, such as the unveiling of Amazon’s Nova 2 models during the recent AWS re:Invent, reflecting an increased investment in AI infrastructure. These developments are crucial as demands for robust AI services grow in various sectors, including consumer and enterprise spaces. Furthermore, the anticipated release of new generations of Graviton and Trainium chips positions Amazon at the cutting edge of AI hardware technology.

As a part of these transitions, Rohit Prasad, who has notably led the AGI organization and was key in developing Amazon’s Alexa, will exit the company by the year’s end. His tenure has been marked by significant contributions to Amazon’s foundation models, which countless businesses now rely on. This change in leadership could usher in new directions for AGI research at Amazon, particularly through strategic collaborations and innovations that align with the evolving technology landscape.

Overall, Amazon’s restructuring around a focused AI, chip, and quantum group signals ambitious momentum in its technology efforts. The consolidation aims to improve coherence across AI models, silicon integration, and cloud services, and to expand Amazon’s offerings across consumer, enterprise, and cloud markets. As Amazon continues to develop its technology portfolio, businesses using AWS services could see greater efficiencies and more advanced AI and cloud capabilities.

-

AI data centers are eating the memory industry, and it could hurt your wallet

The recent earnings report from Micron Technology, a leading memory manufacturer, has revealed a striking trend that may have significant implications for consumers and businesses alike. Micron exceeded expectations in both revenue and profit for its fiscal first quarter but warned of a potential shortage in the broader memory market due to increasing demand from AI data centers.

Micron specializes in producing DRAM (Dynamic Random-Access Memory) chips essential for various computing applications. With the explosive growth of data center construction in the United States, particularly for supporting AI technologies, there is an unprecedented rush for Micron’s high-bandwidth memory (HBM) chips. This surge in demand has resulted in HBM being sold at a premium, causing a ripple effect that adversely affects the availability of DRAM for consumer products.

One of the critical issues stemming from this trend is that DRAM is not only a vital component for AI systems; it is also crucial for consumer electronics such as smartphones, laptops, and even vehicles. The shift in focus by memory suppliers towards AI-related products means that traditional markets, which rely on types of DRAM like double data rate memory (DDR), face potential shortages. This increased concentration on data center requirements could subsequently inflate prices for everyday consumers, limiting access to these essential components.

In a significant move, Micron has announced its exit from the consumer memory business to concentrate solely on supplying memory to AI data centers. This strategic pivot is driven by the higher profit margins associated with HBM production, but it poses a considerable threat to consumers who depend on affordable computer hardware.

As industry experts point out, this shift will not only affect consumer pricing but will also be felt across sectors ranging from personal electronics to industrial machinery. Ryan Reith, a vice president at IDC, emphasized during a recent interview that consumers will feel the financial burden as prices soar, an unwelcome reality driven by the memory crunch.

The consequences of this memory shortage are becoming more evident. Companies such as Samsung and SK Hynix have already begun increasing the prices of memory components, with reports indicating price hikes of up to 60% in some product segments. Price trackers such as PCPartPicker show the trend at retail, where some memory products have surged from around $100 to nearly $450. Such swings in cost are alarming for manufacturers, particularly those producing low- to mid-range systems, which have limited pricing flexibility.
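To put the two figures above on the same scale, the arithmetic can be sketched in a few lines of Python. The function name `percent_increase` is purely illustrative, and the $100 and $450 inputs are the approximate retail prices quoted above, not exact market data.

```python
def percent_increase(old_price: float, new_price: float) -> float:
    """Return the percentage increase from old_price to new_price."""
    if old_price <= 0:
        raise ValueError("old_price must be positive")
    return (new_price - old_price) / old_price * 100.0


# Approximate retail figures quoted in the text (assumed, not exact data).
jump = percent_increase(100.0, 450.0)
print(f"Increase: {jump:.0f}%")  # Increase: 350%

# For comparison: what a 60% hike would do to the same $100 part.
print(f"$100 after a 60% hike: ${100.0 * 1.60:.0f}")
```

On these rough numbers, the retail example works out to a 350% rise, well beyond the 60% figure reported for some product segments.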

As consumers struggle to navigate these rising prices, industry leaders and investors should keep a close eye on these market dynamics. The tech landscape increasingly relies on AI and data-centric applications, but the toll this trend takes on memory availability and pricing may curb innovation in consumer electronics and could also weigh on broader economic growth.

Looking ahead, the relationship between AI data centers and the memory industry’s supply chain needs to be closely monitored. Industry players are advised to prepare for a continuing imbalance in demand and supply, as the focus on AI systems shows no signs of waning. As sectors prepare for the future of technology, understanding these memory-related challenges will be crucial for making informed business decisions.

Ultimately, while the push towards AI advancements offers exciting opportunities, the accompanying strain on traditional markets serves as a reminder of the complexities involved in this technological evolution. Navigating these shifts will be key for business leaders and investors aiming to remain competitive in an ever-evolving marketplace.

-

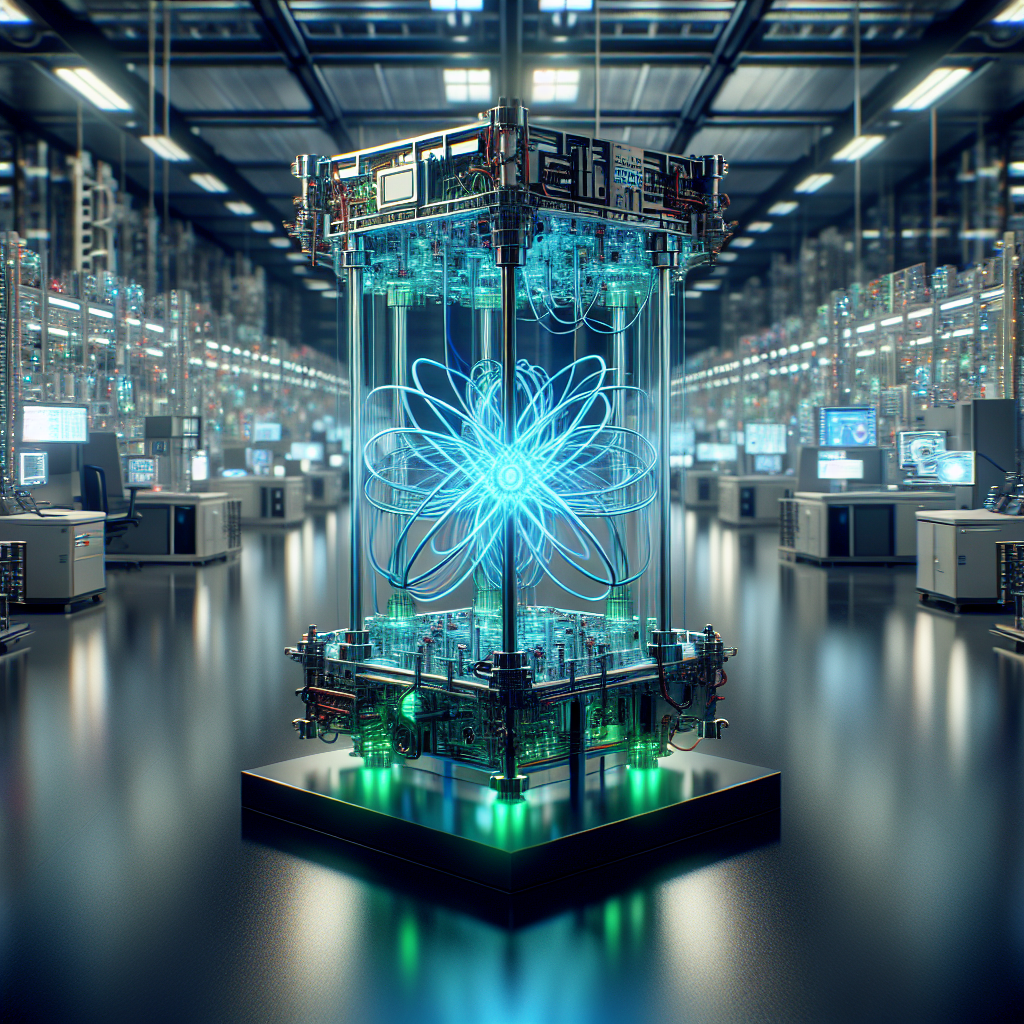

New MIT tech could lead to longer lasting devices and more capable AI systems

As the demand for artificial intelligence (AI) and resource-intensive computing continues to surge, so too does the energy demand that accompanies these technological advancements. Researchers at the Massachusetts Institute of Technology (MIT) have risen to the challenge by developing an innovative technique for stacking transistors and memory directly on the back of computer chips. This groundbreaking approach holds the promise of significantly reducing energy costs associated with AI and high-performance computing.

Conventional chip designs have long hindered efficiency, primarily by separating logic and memory components. This traditional method forces data to shuttle back and forth between these distinct components, resulting in substantial inefficiencies and excessive energy consumption. MIT’s new technique not only addresses this inefficiency but also marks a significant leap towards more energy-efficient designs in future electronic devices.

What sets this research apart is the method’s ability to stack these vital components together on the backside of the chip, creating a more integrated and compact system. This contrasts sharply with existing technologies such as Intel’s Lunar Lake and Apple’s M-series systems-on-chip (SoCs), where sensitive transistors are built on one side of a silicon chip and the opposite side is reserved for wiring. Adding further layers of components has been difficult for traditional fabrication approaches, primarily because the high heat needed to build additional layers would damage the existing circuits.

MIT’s approach, spearheaded by researcher Yanjie Shao, overcomes these barriers through a novel low-temperature fabrication process. Utilizing a unique material known as amorphous indium oxide, the team successfully grew ultra-thin transistor layers at just 150 °C (302 °F). This temperature is sufficiently low to protect and preserve the integrity of the underlying circuits.
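As a quick check on the temperature quoted above, the Celsius-to-Fahrenheit conversion can be sketched as follows. The helper name `celsius_to_fahrenheit` is illustrative only; the 150 °C input is the process temperature reported in the text.

```python
def celsius_to_fahrenheit(celsius: float) -> float:
    """Convert a temperature from degrees Celsius to degrees Fahrenheit."""
    return celsius * 9.0 / 5.0 + 32.0


# Process temperature reported for the indium oxide transistor layers.
print(celsius_to_fahrenheit(150.0))  # 302.0
```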

The breakthrough enables the stacking of active transistors directly onto the back end of the chip, merging both logic and memory functionalities into a single, compact vertical stack. This approach not only conserves space but enhances energy efficiency, paving the way for versatile electronics in ever-smaller devices. “Now, we can build a platform of versatile electronics on the back end of a chip that enables us to achieve high energy efficiency and many different functionalities in very small devices,” Yanjie Shao emphasized. However, he also noted the importance of continued innovation to fully uncover the ultimate performance limits of this promising technology.

Furthermore, the research team has improved upon existing transistor designs by incorporating a ferroelectric material, hafnium-zirconium-oxide, to build 20-nanometer transistors. Initial testing of these devices has shown remarkable results, with switching times of just 10 nanoseconds. Notably, this is the limit of the team’s measurement equipment, so the devices may in fact switch faster.

The implications of this research extend beyond just technical specifications; the potential commercial upside is considerable. As industries increasingly rely on more powerful AI systems and data-driven technologies, lowering their energy consumption could translate into significant cost savings and improved sustainability practices. For business leaders, product developers, and investors, the advancement of MIT’s new transistor stacking technique represents a noteworthy step toward resolving the growing energy concerns surrounding AI applications.

In conclusion, the groundbreaking work being done at MIT not only showcases a novel fabrication technique that stacks transistors and memory but also reinforces the crucial relationship between energy efficiency and technological advancement. This innovation aligns with the general direction of the electronics industry, which seeks to develop more capable systems that are environmentally friendly and economically viable for future applications. As researchers continue to push the boundaries of performance and efficiency, the developments at MIT may very well shape the future landscape of AI and hybrid computing technologies.